Introduction: AI Broke the Old Privacy Model

For years, data privacy programs were built around relatively stable systems: databases, applications, user inputs, and clearly defined processing purposes. Compliance focused on documentation, access control, and breach response.

AI changed that.

In 2026, AI is no longer a standalone experiment. It is embedded across marketing, customer support, product development, analytics, HR, and decision-making systems. As a result, traditional privacy frameworks are no longer sufficient.

AI doesn’t just process data differently; it changes what data is used, how it is interpreted, and how long its influence persists. That reality is forcing organizations to rethink privacy from the ground up.

Why Traditional Privacy Frameworks Are Failing

1. AI Uses Data Indirectly, Not Just Explicitly

Classic privacy models assumed a direct relationship:

- Data collected → Data processed → Outcome delivered

Artificial Intelligence breaks this chain.

Artificial Intelligence:

- Learns patterns from historical data

- Infers new information not explicitly provided

- Makes probabilistic decisions

- Applies learning across future interactions

This means organizations may affect users without actively processing their data again, a scenario many existing privacy policies never anticipated.

2. Training Data Creates Long-Term Risk

In traditional systems, deleting data often ended the risk.

With AI, that’s no longer true.

Once personal or sensitive data influences:

- Model weights

- Behavioral patterns

- Decision logic

The impact can persist long after the original data is deleted.

This raises hard questions regulators are now asking:

- Can models “forget” data?

- How do you honor deletion requests?

- What constitutes ongoing processing?

Old answers no longer work.

3. Artificial Intelligence Blurs the Line Between Data Use and Profiling

Many systems perform advanced profiling by default:

- Behavioral prediction

- Risk scoring

- Personalization

- Automated recommendations

Under modern regulations, this often triggers:

- Higher consent thresholds

- Transparency obligations

- User rights around automated decision-making

Organizations using AI tools, even third-party ones, are increasingly responsible for explaining how decisions are made, not just that data is processed.

Regulators Are Shifting Focus Because of Artificial Intelligence

The regulatory response to AI is not just new laws; it’s a change in how existing privacy laws are enforced.

In 2026, regulators are prioritizing:

- Real-world data usage

- Operational safeguards

- Evidence of privacy-by-design

- Accountability at leadership level

Artificial Intelligence has exposed the weakness of “paper compliance”: policies that look good but don’t reflect reality.

Key Privacy Pressure Points Introduced by Artificial Intelligence

1. Data Minimization Is Now Critical

AI systems often tempt teams to collect “as much data as possible” to improve performance.

That approach is now dangerous.

Regulators are asking:

- Why is each data point necessary?

- Could the system function with less data?

- Is historical data still justified?

In AI-driven environments, data hoarding increases risk without guaranteed benefit.
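One way to make the questions above concrete is an allow-list that ties every field a model may see to a documented purpose. The following is a minimal sketch, not a definitive implementation; the field names and the purposes in `ALLOWED_FIELDS` are hypothetical.

```python
# Hypothetical allow-list: every field the model may use, with its documented purpose.
ALLOWED_FIELDS = {
    "account_age_days": "churn prediction",
    "plan_tier": "churn prediction",
}


def minimize(record: dict) -> dict:
    """Keep only fields with a documented purpose; drop everything else."""
    return {key: value for key, value in record.items() if key in ALLOWED_FIELDS}


raw = {
    "account_age_days": 412,
    "plan_tier": "pro",
    "email": "a@example.com",    # no documented purpose, so it is dropped
    "birth_date": "1990-01-01",  # no documented purpose, so it is dropped
}
print(minimize(raw))  # {'account_age_days': 412, 'plan_tier': 'pro'}
```

Because a field only reaches the pipeline after someone writes down why it is needed, the answer to "why is each data point necessary?" lives next to the code instead of in a separate policy document.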

2. Consent Becomes Harder to Justify

Obtaining valid consent for Artificial Intelligence use is more complex because:

- Future uses may not be fully known

- Models evolve over time

- Secondary use is common

Vague or blanket consent no longer holds up.

Organizations must now:

- Be precise about Artificial Intelligence purposes

- Re-evaluate consent as systems evolve

- Avoid bundling unrelated data uses

Artificial Intelligence forces consent to become dynamic, not one-time.
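As an illustration of dynamic consent, the sketch below stores one consent record per purpose and treats it as valid only for the current version of that purpose. The `Consent` class, the purpose names, and the version registry are assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Consent:
    user_id: str
    purpose: str          # one specific purpose per record, never bundled
    purpose_version: int  # version of the purpose description the user agreed to


# Hypothetical registry: bump a version whenever the AI use behind it changes.
CURRENT_PURPOSE_VERSIONS = {
    "support_chat_summaries": 2,
    "product_recommendations": 1,
}


def consent_is_valid(consent: Consent, purpose: str) -> bool:
    """Consent counts only for the exact purpose, at its current version."""
    current = CURRENT_PURPOSE_VERSIONS.get(purpose)
    return (
        current is not None
        and consent.purpose == purpose
        and consent.purpose_version == current
    )


old = Consent("u1", "support_chat_summaries", purpose_version=1)
print(consent_is_valid(old, "support_chat_summaries"))   # False: purpose changed since consent
print(consent_is_valid(old, "product_recommendations"))  # False: different purpose
```

In this design, a change to how a system uses data invalidates earlier consent automatically, which prompts a fresh request rather than silently extending an old one.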

3. Third-Party Artificial Intelligence Tools Expand Your Risk Surface

Many companies don’t build Artificial Intelligence; they integrate it.

That doesn’t reduce responsibility.

Using Artificial Intelligence platforms, APIs, or copilots introduces questions around:

- Data sharing

- Model training on customer data

- Sub-processing chains

- Cross-border transfers

In 2026, “the vendor handles it” is no longer a defensible privacy position.

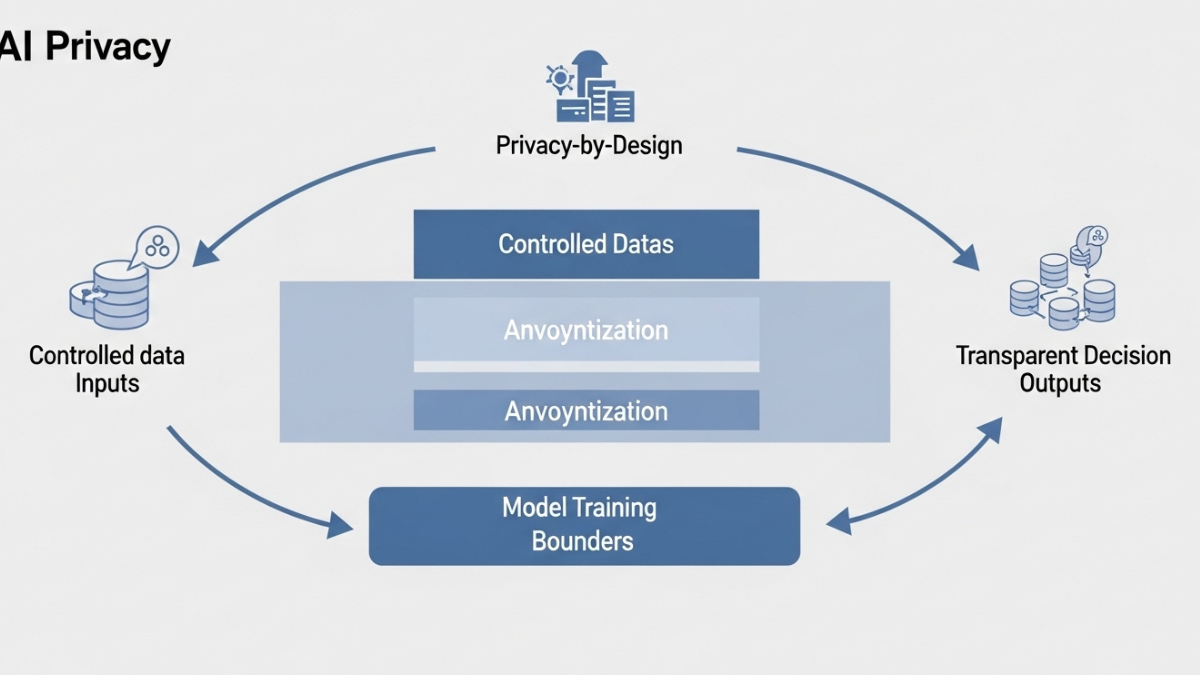

Privacy-by-Design Is No Longer Optional

AI adoption has accelerated the shift from reactive compliance to privacy-by-design.

This means:

- Assessing privacy impact before Artificial Intelligence deployment

- Limiting training data by default

- Applying anonymization and pseudonymization

- Designing models with explainability in mind

Privacy must be embedded at:

- Architecture level

- Model selection stage

- Data pipeline design

Retrofitting controls after deployment is too late, and it is increasingly penalized.
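To illustrate the pseudonymization step listed above, here is a minimal sketch that replaces a direct identifier with a keyed hash (HMAC-SHA256) from Python's standard library. The `pseudonymize` function and the example key are assumptions for illustration; a real key would come from a secrets manager, not source code.

```python
import hashlib
import hmac


def pseudonymize(identifier: str, secret_key: bytes) -> str:
    """Return a keyed hash (HMAC-SHA256) of an identifier as a hex string."""
    return hmac.new(secret_key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


# Example key only; in practice, load this from a secrets manager.
key = b"example-key-do-not-use-in-production"

token_a = pseudonymize("alice@example.com", key)
token_b = pseudonymize("alice@example.com", key)
print(token_a == token_b)  # True: the same input and key always give the same token
```

The same person maps to the same token, so records can still be joined in a data pipeline without exposing the raw identifier. This is pseudonymization, not anonymization: whoever holds the key can re-link tokens to known identifiers, so the key needs its own access controls.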

The New Data Privacy Playbook for Artificial Intelligence

1. Treat Artificial Intelligence Systems as Ongoing Processing Activities

Privacy assessments should no longer be “set and forget.”

AI systems require:

- Continuous monitoring

- Periodic reassessment

- Clear ownership

If the model evolves, the privacy assessment must evolve with it.
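The idea that an assessment must track the model can be sketched as a simple staleness check. The `PrivacyAssessment` fields, the version strings, and the 180-day review interval are assumptions chosen for this example, not a regulatory requirement.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class PrivacyAssessment:
    model_version: str  # model version the assessment was written against
    completed_on: date
    owner: str          # named person or team accountable for the assessment


MAX_AGE = timedelta(days=180)  # assumed review interval


def needs_reassessment(assessment: PrivacyAssessment, deployed_version: str, today: date) -> bool:
    """Flag the assessment if the model changed or the review interval has passed."""
    model_changed = assessment.model_version != deployed_version
    too_old = (today - assessment.completed_on) > MAX_AGE
    return model_changed or too_old


assessment = PrivacyAssessment("v1.2", date(2026, 1, 10), owner="privacy-team")
print(needs_reassessment(assessment, "v1.3", date(2026, 2, 1)))  # True: model changed
print(needs_reassessment(assessment, "v1.2", date(2026, 2, 1)))  # False: same model, recent review
```

A check like this could run in a deployment pipeline so that shipping a new model version without an updated assessment is caught before release.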

2. Separate Model Training from User Interaction Data

Where possible:

- Avoid training on live customer data

- Use synthetic or anonymized datasets

- Strictly control feedback loops

This reduces long-term exposure and simplifies compliance obligations.
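A rough sketch of this separation is a gate that keeps records out of a training set unless they are synthetic or explicitly marked as anonymized. The `source` and `anonymized` fields are hypothetical labels that an upstream pipeline would need to set reliably.

```python
def eligible_for_training(record: dict) -> bool:
    """Allow a record into training only if it is synthetic or marked anonymized."""
    return record.get("source") == "synthetic" or record.get("anonymized") is True


records = [
    {"id": 1, "source": "synthetic"},
    {"id": 2, "source": "live", "anonymized": True},
    {"id": 3, "source": "live"},  # raw customer interaction: excluded by default
]

training_set = [r for r in records if eligible_for_training(r)]
print([r["id"] for r in training_set])  # [1, 2]
```

The gate excludes by default: a record with missing labels never reaches training, so a labeling mistake upstream fails safe.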

3. Strengthen Transparency Without Over-Promising

Organizations must explain Artificial Intelligence usage honestly:

- What data is used

- What decisions are automated

- What safeguards exist

Over-simplification is risky. So is technical obfuscation.

Clear, accurate communication builds trust and reduces enforcement risk.

4. Assign Clear Accountability

Artificial Intelligence privacy failures are increasingly treated as governance failures.

Best-practice organizations:

- Assign Artificial Intelligence oversight roles

- Involve legal, security, and product teams early

- Ensure leadership visibility

Artificial Intelligence privacy is no longer just a DPO concern. It’s an executive one.

What This Means for Businesses in 2026

Artificial Intelligence adoption is accelerating, but so is scrutiny.

Organizations that:

- Deploy Artificial Intelligence without privacy strategy

- Rely on outdated consent models

- Ignore training data implications

are accumulating regulatory and reputational risk.

Those that adapt their privacy playbook gain:

- Faster Artificial Intelligence adoption with fewer blockers

- Stronger user trust

- Lower enforcement exposure

- Better long-term scalability

Privacy maturity is becoming a competitive advantage.

Final Thoughts: Artificial Intelligence Forces Honesty in Privacy

Artificial Intelligence has removed the illusion that privacy can be managed through paperwork alone.

In 2026, data privacy is about:

- How systems actually behave

- How decisions are made

- How long data influence persists

- Who is accountable when things go wrong

Artificial Intelligence didn’t make privacy harder; it made weak privacy strategies visible.

Organizations that respond with discipline, transparency, and design-level controls will thrive. Those that don’t will spend years reacting to audits, fines, and trust erosion.

The new data privacy playbook isn’t optional.

It’s the cost of doing Artificial Intelligence responsibly.
