Introduction: Why Machine Learning Is Leaving the Cloud

For years, machine learning followed a simple pattern: collect data, send it to the cloud, run models, return predictions. That approach worked until scale, latency, cost, and privacy got in the way.

In 2026, a growing number of ML systems are breaking this pattern. Instead of sending data to distant servers, models are moving closer to where data is generated: on devices, sensors, gateways, and embedded systems. This shift is known as Edge AI, and it’s changing how machine learning is built, deployed, and scaled.

Edge AI isn’t a replacement for cloud ML. It’s a response to real constraints that cloud-first architectures can’t always solve.

What Is Edge AI?

Edge AI refers to running machine learning models at or near the source of data, rather than in centralized cloud environments.

That “edge” can be:

- Smartphones and tablets

- IoT sensors and cameras

- Industrial machines

- Vehicles and robots

- Retail devices and kiosks

- On-premise gateways

In Edge AI, data is processed locally. The model runs on the device (or nearby), and only essential information, if any, is sent to the cloud.

Why Edge AI Exists: The Core Drivers

1. Latency Matters

Some decisions must happen instantly. Sending data to the cloud and waiting for a response introduces delay that’s unacceptable for:

- Autonomous vehicles

- Robotics

- Industrial safety systems

- Real-time fraud detection

- Smart manufacturing

Edge AI enables millisecond-level decisions without round trips to the cloud.

2. Bandwidth and Cost Constraints

Streaming raw data, especially video, audio, or sensor data, is expensive.

Edge AI:

- Reduces data transfer

- Lowers cloud processing costs

- Scales better for high-volume data sources

Instead of uploading everything, devices process data locally and send only what matters.
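A minimal sketch of "send only what matters", assuming a device that collects raw numeric readings and uploads one summary per window instead of every sample. The function names, the JSON encoding, and the choice of summary statistics (count, mean, min, max) are illustrative assumptions, not a standard protocol.

```python
import json
import statistics


def summarize_window(samples: list[float]) -> dict:
    """Reduce one window of raw sensor samples to a compact summary."""
    return {
        "n": len(samples),
        "mean": round(statistics.fmean(samples), 3),
        "min": min(samples),
        "max": max(samples),
    }


def payloads(raw: list[float], window: int) -> tuple[bytes, bytes]:
    """Return (raw payload, summarized payload) as JSON bytes so their sizes can be compared."""
    raw_bytes = json.dumps(raw).encode()
    summaries = [summarize_window(raw[i:i + window]) for i in range(0, len(raw), window)]
    summary_bytes = json.dumps(summaries).encode()
    return raw_bytes, summary_bytes


if __name__ == "__main__":
    # Hypothetical signal: 1,000 temperature readings, summarized in windows of 100
    readings = [20.0 + (i % 10) * 0.1 for i in range(1000)]
    raw_b, summary_b = payloads(readings, window=100)
    print(f"raw: {len(raw_b)} bytes, summarized: {len(summary_b)} bytes")
```

The trade-off is explicit: the cloud no longer sees individual samples, so the summary has to contain whatever downstream analysis actually needs.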

3. Privacy and Compliance

In many industries, data cannot freely leave its environment.

Edge AI helps with:

- Data sovereignty

- Privacy-by-design

- Regulatory compliance (healthcare, finance, public sector)

By keeping sensitive data on-device, organizations reduce exposure and risk.

4. Reliability and Offline Operation

Cloud connectivity isn’t guaranteed.

Edge AI allows systems to:

- Operate offline

- Continue functioning during outages

- Maintain safety and reliability

This is critical in remote locations, factories, and transportation systems.
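One common way to keep working through outages is a store-and-forward buffer: results are queued on the device and uploaded in order once the link comes back. This is a sketch under stated assumptions: `send` is a hypothetical uplink function that returns `True` on success, and the bounded buffer drops the oldest message when full.

```python
from collections import deque
from typing import Callable


class StoreAndForward:
    """Buffer messages locally while offline; flush them oldest-first when connectivity returns.

    `send` is a hypothetical uplink callable returning True on success.
    When the buffer is full, the oldest message is dropped.
    """

    def __init__(self, send: Callable[[dict], bool], max_buffer: int = 1000):
        self.send = send
        self.buffer: deque[dict] = deque(maxlen=max_buffer)

    def publish(self, message: dict) -> None:
        # Always enqueue first so ordering is preserved, then try to drain.
        self.buffer.append(message)
        self.flush()

    def flush(self) -> int:
        """Send buffered messages oldest-first; stop at the first failure. Returns the count sent."""
        sent = 0
        while self.buffer:
            if not self.send(self.buffer[0]):
                break  # still offline: keep the message for a later retry
            self.buffer.popleft()
            sent += 1
        return sent
```

Dropping the oldest data is only one policy; a safety-critical system might instead prioritize alarms or persist the queue to disk.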

How Edge AI Works (At a Basic Level)

From a machine learning fundamentals perspective, Edge AI still relies on the same core concepts:

- Data

- Models

- Training

- Inference

The difference lies in where inference happens.

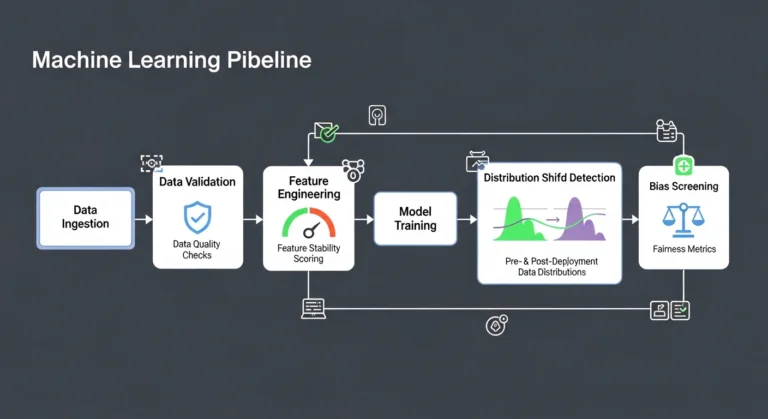

Typical workflow:

- Model is trained centrally (often in the cloud)

- Model is optimized and compressed

- Model is deployed to edge devices

- Inference runs locally

- Optional feedback or updates are sent back

Training usually remains centralized. Inference moves to the edge.
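The split between central training and local inference can be sketched in miniature. Here a toy linear model fitted by least squares stands in for a real network, and a JSON string stands in for a real model artifact; the optimization step is skipped, and all names are hypothetical.

```python
import json


def train_centrally(xs: list[float], ys: list[float]) -> dict:
    """'Cloud' step: fit y = w*x + b by ordinary least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    w = cov / var
    b = mean_y - w * mean_x
    return {"version": 1, "w": w, "b": b}


def export_model(model: dict) -> str:
    """Serialize the trained parameters into a small artifact to ship to devices."""
    return json.dumps(model)


class EdgeModel:
    """'Device' step: load the artifact once, then run inference locally with no network calls."""

    def __init__(self, artifact: str):
        params = json.loads(artifact)
        self.version = params["version"]
        self.w = params["w"]
        self.b = params["b"]

    def predict(self, x: float) -> float:
        return self.w * x + self.b


if __name__ == "__main__":
    model = train_centrally([0.0, 1.0, 2.0, 3.0], [1.0, 3.0, 5.0, 7.0])
    device = EdgeModel(export_model(model))
    print(device.predict(10.0))
```

The device only ever needs the artifact and a small inference routine; the training data stays wherever training happened.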

Key Machine Learning Basics Behind Edge AI

Model Optimization

Edge devices have limited:

- Memory

- Compute power

- Energy

To run efficiently, models must be:

- Smaller

- Faster

- Less power-hungry

Common techniques include:

- Quantization

- Pruning

- Knowledge distillation

These techniques are core ML skills, and they are becoming increasingly important.
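To make the first two techniques concrete, here is a simplified sketch on a plain list of weights: symmetric per-tensor int8 quantization (each weight stored in 1 byte instead of 4 for float32) and magnitude pruning (zeroing the smallest weights). Real toolchains quantize per layer or per channel and calibrate activations too; knowledge distillation is not shown here.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor int8 quantization: w ≈ q * scale, with q in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from int8 values."""
    return [v * scale for v in q]


def magnitude_prune(weights: list[float], sparsity: float) -> list[float]:
    """Zero out the `sparsity` fraction of weights with the smallest absolute value."""
    k = int(len(weights) * sparsity)
    if k == 0:
        return list(weights)
    # Indices of the k smallest-magnitude weights
    drop = set(sorted(range(len(weights)), key=lambda i: abs(weights[i]))[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


if __name__ == "__main__":
    w = [0.5, -1.27, 0.01, 0.9, -0.02]
    q, scale = quantize_int8(w)
    print(q, scale, dequantize(q, scale))
    print(magnitude_prune(w, sparsity=0.4))
```

Rounding to the nearest step means each dequantized weight is off by at most half a quantization step (`scale / 2`), which is why accuracy usually degrades only slightly.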

Feature Engineering at the Edge

Edge AI often relies on simpler, well-defined features rather than massive raw datasets.

This pushes ML practitioners to:

- Understand data deeply

- Design efficient feature pipelines

- Balance accuracy with performance

It’s ML fundamentals applied under real constraints.
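As an illustration, a window of raw vibration or audio samples can be reduced to a handful of cheap, interpretable features before it ever reaches a model. The feature set below (mean, RMS, peak-to-peak, zero-crossing rate) is a common but assumed choice, not a prescription.

```python
import math


def extract_features(window: list[float]) -> dict[str, float]:
    """Compact, well-defined features from one window of a 1-D sensor signal."""
    n = len(window)
    mean = sum(window) / n
    rms = math.sqrt(sum(x * x for x in window) / n)
    peak_to_peak = max(window) - min(window)
    # Fraction of consecutive sample pairs whose sign flips (exact zeros are not counted)
    crossings = sum(1 for a, b in zip(window, window[1:]) if a * b < 0)
    zcr = crossings / (n - 1) if n > 1 else 0.0
    return {"mean": mean, "rms": rms, "peak_to_peak": peak_to_peak, "zero_crossing_rate": zcr}


if __name__ == "__main__":
    print(extract_features([1.0, -1.0, 1.0, -1.0]))
```

A small model consuming four numbers per window is far cheaper to run on a microcontroller than one consuming the raw samples, at the cost of deciding up front which signal properties matter.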

Continuous Learning (Carefully Applied)

Some edge systems support:

- Periodic model updates

- Federated learning

- Feedback loops

But continuous learning must be tightly controlled to avoid drift, instability, or security issues.
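A sketch of the federated averaging (FedAvg) idea, where devices send model weights rather than raw data and the server averages them weighted by each device's sample count. The `accept_update` guardrail is a hypothetical example of "tight control": the new global model is rolled out only if its held-out score does not drop by more than a set margin.

```python
def federated_average(updates: list[tuple[list[float], int]]) -> list[float]:
    """FedAvg-style aggregation: average client weight vectors, weighted by local sample counts."""
    total = sum(n for _, n in updates)
    dim = len(updates[0][0])
    return [sum(w[i] * n for w, n in updates) / total for i in range(dim)]


def accept_update(current_score: float, candidate_score: float, max_drop: float = 0.01) -> bool:
    """Hypothetical guardrail: accept the candidate only if it is not worse than current by more than max_drop."""
    return candidate_score >= current_score - max_drop


if __name__ == "__main__":
    # Two hypothetical devices: one trained on 1 sample, one on 3
    updates = [([1.0, 0.0], 1), ([3.0, 2.0], 3)]
    print(federated_average(updates))
```

Weighting by sample count keeps a device with very little data from pulling the global model as hard as one with a lot.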

Real-World Use Cases of Edge AI

Smart Cameras and Vision Systems

Instead of streaming video to the cloud, cameras:

- Detect objects locally

- Trigger alerts

- Store only relevant clips

This reduces bandwidth and improves response time.
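"Store only relevant clips" is often implemented with a small pre-roll buffer: the camera keeps the last few frames in memory and saves them, plus a few frames after each detection, only when something relevant happens. In this sketch `is_relevant` stands in for the output of an on-device detector, and frames can be any objects.

```python
from collections import deque


class ClipRecorder:
    """Keep only frames around detections: a short pre-roll buffer plus frames after each trigger."""

    def __init__(self, pre_roll: int, post_roll: int):
        self.pre = deque(maxlen=pre_roll)  # most recent frames seen before a detection
        self.post_roll = post_roll
        self.remaining = 0                 # frames still to record after the last detection
        self.current: list = []
        self.clips: list[list] = []

    def on_frame(self, frame, is_relevant: bool) -> None:
        if is_relevant:
            if self.remaining == 0:
                # New event: start the clip with the buffered pre-roll frames
                self.current = list(self.pre)
                self.pre.clear()
            self.current.append(frame)
            self.remaining = self.post_roll  # another detection extends the clip
        elif self.remaining > 0:
            self.current.append(frame)
            self.remaining -= 1
            if self.remaining == 0:
                self.clips.append(self.current)
                self.current = []
        else:
            self.pre.append(frame)  # nothing happening: frame is kept only briefly


if __name__ == "__main__":
    rec = ClipRecorder(pre_roll=2, post_roll=2)
    for i in range(10):
        rec.on_frame(i, is_relevant=(i == 4))
    print(rec.clips)
```

Everything outside a clip is overwritten in memory and never leaves the device.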

Industrial IoT and Manufacturing

Edge AI monitors:

- Equipment health

- Anomalies

- Safety conditions

Decisions happen on-site, preventing downtime and accidents.
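A simple on-site anomaly check might compare each new reading against recent history, as in this rolling z-score sketch. The window size and the threshold of 3 standard deviations are assumed defaults, and production systems often use learned models instead; the point is that the decision needs only a small amount of local memory and no network.

```python
import math
from collections import deque


class RollingZScore:
    """Flag a reading as anomalous if it lies more than `threshold` standard deviations
    from the mean of the last `window` readings."""

    def __init__(self, window: int = 50, threshold: float = 3.0):
        self.history = deque(maxlen=window)
        self.threshold = threshold

    def update(self, x: float) -> bool:
        anomalous = False
        if len(self.history) >= 2:
            mean = sum(self.history) / len(self.history)
            var = sum((h - mean) ** 2 for h in self.history) / (len(self.history) - 1)
            std = math.sqrt(var)
            anomalous = std > 0 and abs(x - mean) / std > self.threshold
        # Note: anomalies are also added to history; a stricter design might exclude them
        self.history.append(x)
        return anomalous


if __name__ == "__main__":
    det = RollingZScore(window=20)
    for v in [10.0, 10.1] * 10:
        det.update(v)
    print(det.update(15.0))
```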

Healthcare Devices

Medical devices use Edge AI to:

- Analyze signals locally

- Protect patient data

- Provide instant feedback

This supports privacy and reliability in critical environments.

Retail and Customer Experience

Edge AI powers:

- In-store analytics

- Dynamic pricing displays

- Inventory tracking

All without constant cloud dependency.

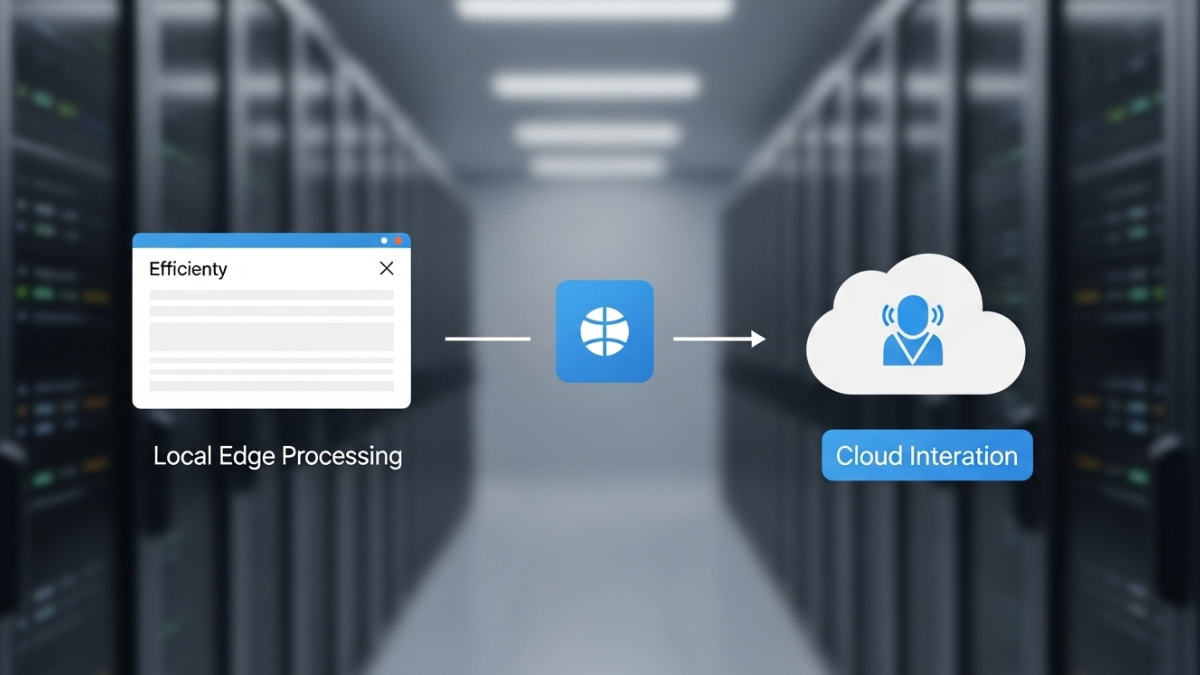

Edge AI vs Cloud AI: Not a Competition

A common mistake is framing Edge AI as “better than” cloud AI.

In reality:

- Cloud AI excels at training, aggregation, and global intelligence

- Edge AI excels at speed, privacy, and local decision-making

Modern ML architectures are hybrid by design.

The future belongs to systems that intelligently split work between edge and cloud.
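One common hybrid pattern is confidence-based escalation: the edge model answers when it is confident, and harder inputs go to a larger cloud model when a connection is available. The model callables and the 0.8 threshold below are hypothetical placeholders.

```python
from typing import Callable

Prediction = tuple[str, float]  # (label, confidence in [0, 1])


def hybrid_predict(
    x,
    edge_model: Callable[[object], Prediction],
    cloud_model: Callable[[object], Prediction],
    min_confidence: float = 0.8,
    online: bool = True,
) -> tuple[str, str]:
    """Answer locally when the edge model is confident; escalate to the cloud otherwise.

    Returns (label, source). When offline, the edge answer is used regardless of confidence.
    """
    label, conf = edge_model(x)
    if conf >= min_confidence or not online:
        return label, "edge"
    cloud_label, _ = cloud_model(x)
    return cloud_label, "cloud"


if __name__ == "__main__":
    edge = lambda x: ("cat", 0.95) if x == "clear" else ("cat", 0.4)
    cloud = lambda x: ("dog", 0.99)
    print(hybrid_predict("clear", edge, cloud), hybrid_predict("blurry", edge, cloud))
```

The threshold sets the split: raising it sends more traffic to the cloud for accuracy, lowering it keeps more decisions local for speed and privacy.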

Challenges of Edge AI (That Beginners Should Understand)

Deployment Complexity

Managing models across thousands of devices is hard:

- Versioning

- Updates

- Monitoring

This introduces operational challenges beyond pure ML.
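A widely used way to manage updates across a large fleet is a staged (canary) rollout: hash each device ID into a stable bucket and raise the rollout fraction gradually. This sketch assumes devices have unique string IDs and that versions are labeled with strings like `"v2"`; both are illustrative.

```python
import hashlib


def rollout_bucket(device_id: str, model_version: str) -> float:
    """Map a device to a stable value in [0, 1) for a given model version."""
    digest = hashlib.sha256(f"{model_version}:{device_id}".encode()).digest()
    return int.from_bytes(digest[:8], "big") / 2**64


def should_update(device_id: str, model_version: str, rollout_fraction: float) -> bool:
    """A device receives the new version once the rollout fraction covers its bucket."""
    return rollout_bucket(device_id, model_version) < rollout_fraction


if __name__ == "__main__":
    ids = [f"device-{i}" for i in range(10000)]
    share = sum(should_update(d, "v2", 0.1) for d in ids) / len(ids)
    print(f"{share:.3f} of devices updated at a 10% rollout")
```

Because the bucket is deterministic, raising the fraction from 10% to 50% only adds devices; nobody who already updated is rolled back by accident.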

Limited Observability

Debugging models at the edge is more difficult than in centralized systems.

Teams must invest in:

- Logging strategies

- Monitoring pipelines

- Robust testing
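One possible logging strategy is to aggregate telemetry on the device and upload a compact summary, keeping only a small random sample of raw inputs for debugging. The field names and the 1% default sample rate below are assumptions for illustration.

```python
import random
from collections import Counter
from typing import Optional


class EdgeMetrics:
    """Aggregate inference telemetry on-device; keep a random sample of raw inputs for debugging."""

    def __init__(self, sample_rate: float = 0.01, seed: Optional[int] = None):
        self.count = 0
        self.total_latency_ms = 0.0
        self.max_latency_ms = 0.0
        self.labels = Counter()
        self.samples: list = []
        self.sample_rate = sample_rate
        self.rng = random.Random(seed)

    def record(self, input_ref, label: str, latency_ms: float) -> None:
        self.count += 1
        self.total_latency_ms += latency_ms
        self.max_latency_ms = max(self.max_latency_ms, latency_ms)
        self.labels[label] += 1
        if self.rng.random() < self.sample_rate:
            self.samples.append(input_ref)  # kept for offline inspection

    def summary(self) -> dict:
        """Compact payload to upload periodically instead of every prediction."""
        return {
            "count": self.count,
            "mean_latency_ms": self.total_latency_ms / self.count if self.count else 0.0,
            "max_latency_ms": self.max_latency_ms,
            "label_counts": dict(self.labels),
            "sampled_inputs": len(self.samples),
        }
```

Label counts double as a cheap drift signal: if the distribution of predictions on a device shifts sharply, that device's inputs are worth pulling for review.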

Security Risks

Edge devices can be:

- Physically accessed

- Tampered with

- Targeted by attacks

Security must be designed in from the start.
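One small piece of "designed in from the start" is refusing to load a model file that has been altered. This sketch uses HMAC-SHA256 from Python's standard library to show the check; the key handling is an assumption. Because a shared key stored on a physically accessible device can be extracted, production systems generally prefer asymmetric signatures, where the private signing key never leaves the build infrastructure.

```python
import hashlib
import hmac


def sign_artifact(artifact: bytes, key: bytes) -> str:
    """Build-server side: compute an HMAC-SHA256 tag over the model file."""
    return hmac.new(key, artifact, hashlib.sha256).hexdigest()


def verify_artifact(artifact: bytes, tag: str, key: bytes) -> bool:
    """Device side: accept the model only if the tag matches (constant-time comparison)."""
    expected = hmac.new(key, artifact, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, tag)


if __name__ == "__main__":
    key = b"demo-key"  # hypothetical key, for illustration only
    model = b"\x00\x01weights"
    tag = sign_artifact(model, key)
    print(verify_artifact(model, tag, key), verify_artifact(model + b"!", tag, key))
```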

Why Edge AI Matters for ML Learners

For anyone learning machine learning today, Edge AI reinforces a critical lesson:

ML is not just about accuracy; it’s about deployment reality.

Understanding Edge AI helps learners:

- Appreciate constraints

- Design efficient models

- Think system-wide, not model-only

It bridges the gap between theory and production.

The Bigger Trend: ML Moving Closer to Reality

Edge AI represents a broader shift in machine learning:

- From experimentation → execution

- From centralized → distributed systems

- From unlimited resources → constrained environments

This shift forces better engineering discipline and better ML fundamentals.

Final Thoughts: Edge AI Is ML Growing Up

Edge AI exists because the real world is messy, fast, and constrained. Running models where data lives is not a shortcut; it’s a necessity.

For organizations, Edge AI unlocks:

- Faster decisions

- Lower costs

- Better privacy

- Greater resilience

For learners and practitioners, it’s a reminder that great machine learning works within reality, not above it.

Edge AI isn’t the future because it’s trendy.

It’s the future because it solves problems the cloud alone cannot.

If your organization or team is exploring machine learning deployment strategies, including Edge AI and hybrid architectures, technology consulting and ML advisory can help you design systems that scale in the real world.