The transition away from script-based testing isn’t theoretical anymore. It’s already underway, and the gap between teams that adopt AI-driven testing and those that cling to script-heavy frameworks is widening fast.

For years, automation testing has been synonymous with writing scripts: structured, repeatable, and painfully fragile. Frameworks like Selenium became the backbone of QA automation. Entire teams, processes, and even careers were built around maintaining these systems.

But here’s the uncomfortable truth:

Script-based testing doesn’t scale in a modern software environment.

And AI is exposing that weakness.

The Core Problem: Script-Based Testing Was Never Built for Speed

Script-based testing was designed in an era where:

- Release cycles were slower

- Applications were less dynamic

- UI changes were less frequent

That world doesn’t exist anymore.

Today’s systems are:

- Continuously deployed

- Built on microservices

- Rapidly evolving at the UI and API layers

Trying to test this environment with static scripts is like trying to manage cloud infrastructure with manual server configs. It’s outdated thinking.

Where Script-Based Testing Breaks Down

1. Maintenance Becomes the Primary Cost Center

Every change in the UI triggers a cascade of broken tests.

- XPath changes → test failure

- CSS class updates → test failure

- Minor layout shifts → test failure

Your team ends up spending:

As much as 60–80% of its time fixing tests instead of validating product quality.

That’s not testing. That’s firefighting.

2. Flaky Tests Destroy Trust in Automation

Flaky tests are the silent killer of QA systems.

They:

- Pass sometimes

- Fail randomly

- Create false positives

Eventually, developers stop trusting test results.

And when that happens, your automation suite becomes irrelevant.

3. Slow Feedback Loops Kill Velocity

Script-heavy frameworks take time to execute and debug.

In a CI/CD pipeline:

- Slow tests = delayed feedback

- Delayed feedback = slower releases

In a competitive environment, that’s unacceptable.

4. Talent Dependency Is Too High

Maintaining script-based systems requires engineers who:

- Understand programming deeply

- Know testing frameworks inside out

- Can debug complex failures quickly

That’s expensive and hard to scale.

AI-Driven Testing: What’s Actually Changing

Modern platforms like Testim, Mabl, and Functionize are not just improving testing; they’re redefining it.

They shift testing from:

“Write and maintain scripts”

to

“Train and guide intelligent systems”

Key Capabilities of AI Testing Systems

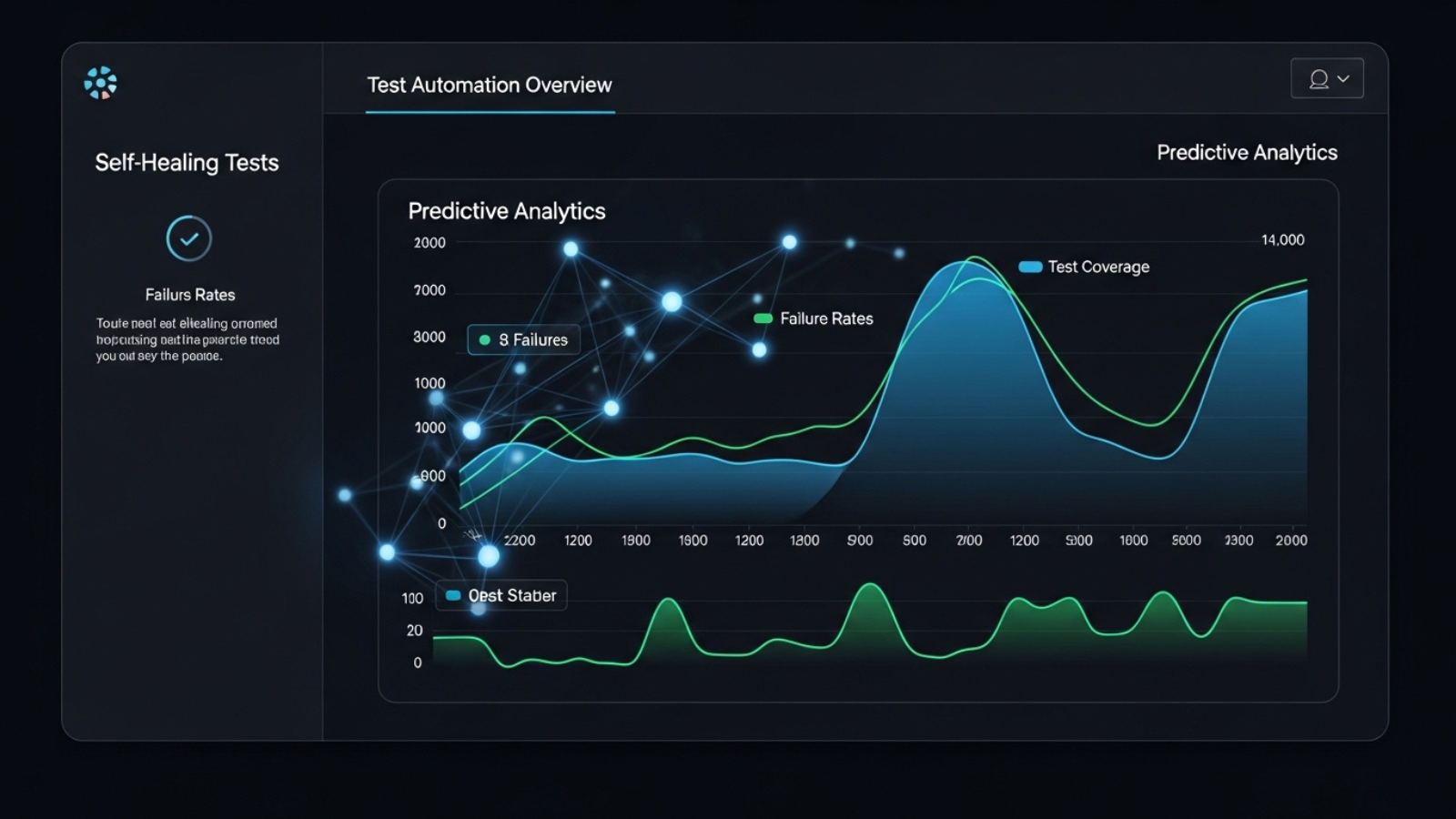

1. Self-Healing Test Execution

Traditional approach:

- Element changes → test breaks

AI approach:

- Model identifies similar elements

- Automatically adjusts selectors

- Test continues without manual fixes

This alone eliminates a massive chunk of maintenance overhead.
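To make the idea concrete, here is a minimal sketch of selector healing, assuming the tool stores a "fingerprint" of each element (tag, text, attributes) from the last passing run. The `Element` class, `similarity` score, and `0.6` threshold are all hypothetical; commercial tools use richer signals and their internals are not described here.

```python
from dataclasses import dataclass, field


@dataclass
class Element:
    """Simplified stand-in for a DOM element (hypothetical, not a real driver API)."""
    tag: str
    attrs: dict = field(default_factory=dict)
    text: str = ""


def similarity(fingerprint: Element, candidate: Element) -> float:
    """Fraction of the fingerprint's features (tag, text, attributes) the candidate shares."""
    features = [("tag", fingerprint.tag), ("text", fingerprint.text)]
    features += [(f"attr:{k}", v) for k, v in fingerprint.attrs.items()]
    candidate_features = {("tag", candidate.tag), ("text", candidate.text)}
    candidate_features |= {(f"attr:{k}", v) for k, v in candidate.attrs.items()}
    matched = sum(1 for feature in features if feature in candidate_features)
    return matched / len(features)


def heal(fingerprint: Element, page: list, threshold: float = 0.6):
    """Return the closest element on the page, or None if nothing is similar enough."""
    best = max(page, key=lambda el: similarity(fingerprint, el), default=None)
    if best is None or similarity(fingerprint, best) < threshold:
        return None  # too different: fail the test instead of guessing
    return best


# The button's id changed, but tag, text, and class still match.
saved = Element("button", {"id": "submit", "class": "btn primary"}, "Submit")
page = [
    Element("a", {"class": "link"}, "Help"),
    Element("button", {"id": "submit-btn", "class": "btn primary"}, "Submit"),
]
healed = heal(saved, page)
```

The threshold matters: set it too low and the test silently clicks the wrong element, which is worse than a broken test.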

2. Intelligent Test Creation

Instead of manually writing test cases, AI can:

- Observe user sessions

- Map workflows

- Generate realistic test scenarios

This creates tests that reflect actual user behavior, not assumptions.

3. Risk-Based Testing

Not all tests are equally important.

AI systems analyze:

- Code changes

- Historical failures

- User impact

Then prioritize tests accordingly.

This means faster pipelines without sacrificing quality.
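As a rough illustration, the three signals above can be combined into a single score used to order the suite. This is a hand-weighted sketch, not a learned model; the `CandidateTest` fields, the weights, and the example numbers are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class CandidateTest:
    name: str
    covers: set          # source files the test exercises
    failure_rate: float  # historical failures / runs, 0.0 to 1.0
    user_impact: float   # 0.0 to 1.0, how critical the workflow is to users


def risk_score(test: CandidateTest, changed_files: set, weights=(0.5, 0.3, 0.2)) -> float:
    """Weighted sum of change overlap, failure history, and user impact."""
    w_change, w_history, w_impact = weights
    overlap = len(test.covers & changed_files) / len(test.covers) if test.covers else 0.0
    return w_change * overlap + w_history * test.failure_rate + w_impact * test.user_impact


def prioritize(tests: list, changed_files: set) -> list:
    """Highest-risk tests first, so the pipeline can fail fast or cut the tail."""
    return sorted(tests, key=lambda t: risk_score(t, changed_files), reverse=True)


suite = [
    CandidateTest("profile", {"profile.py"}, failure_rate=0.05, user_impact=0.3),
    CandidateTest("checkout", {"cart.py", "pay.py"}, failure_rate=0.1, user_impact=0.9),
]
ordered = [t.name for t in prioritize(suite, changed_files={"pay.py"})]
```

With a commit that touches `pay.py`, the checkout test moves to the front of the queue because it covers the changed file and guards a high-impact workflow.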

4. Visual and Functional Validation

AI doesn’t just check if something “works.”

It can:

- Detect UI inconsistencies

- Identify layout shifts

- Compare visual states intelligently

This reduces the need for brittle visual assertion scripts.
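For a sense of what "comparing visual states" involves at its simplest, here is a tolerance-based diff over grayscale pixel grids. It is a plain pixel comparison, not an AI model; the function names and both thresholds are assumptions chosen for the sketch.

```python
def changed_fraction(baseline, current, pixel_tolerance=10):
    """Fraction of pixels whose grayscale value differs by more than the tolerance."""
    same_shape = len(baseline) == len(current) and all(
        len(row_a) == len(row_b) for row_a, row_b in zip(baseline, current)
    )
    if not same_shape:
        raise ValueError("screenshots must have the same dimensions")
    total = sum(len(row) for row in baseline)
    if total == 0:
        return 0.0
    changed = sum(
        1
        for row_a, row_b in zip(baseline, current)
        for a, b in zip(row_a, row_b)
        if abs(a - b) > pixel_tolerance
    )
    return changed / total


def visually_equal(baseline, current, max_changed=0.01, pixel_tolerance=10):
    """Treat small rendering noise as equal; flag larger differences."""
    return changed_fraction(baseline, current, pixel_tolerance) <= max_changed


baseline = [[0, 0], [0, 0]]
noise = [[5, 0], [0, 0]]      # anti-aliasing-level difference
shifted = [[255, 0], [0, 0]]  # one of four pixels clearly changed
```

A fixed tolerance like this ignores minor rendering noise but cannot tell a meaningful layout shift from an irrelevant one, which is the gap intelligent comparison aims to close.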

5. Continuous Learning

The system improves over time by learning from:

- Failures

- Changes

- Usage patterns

Script-based systems degrade over time.

AI systems improve over time.

The Economics of AI vs Script-Based Testing

Let’s be blunt: this shift is driven by economics.

Script-Based Testing:

- High upfront setup

- Continuous maintenance cost

- Increasing complexity over time

AI-Driven Testing:

- Higher tool cost initially

- Lower long-term maintenance

- Better scalability

If you’re running a business, the decision is obvious.

The Integration Layer: Where Most Teams Fail

Adopting AI tools without changing your workflow is a mistake.

AI testing must be embedded into your delivery pipeline using tools like:

- Jenkins

- GitHub Actions

Correct Integration Looks Like:

- Every commit triggers AI-based test execution

- Feedback loops are near real-time

- Failures are categorized intelligently

- Reports provide actionable insights
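Failure categorization can start far simpler than a trained model. The sketch below sorts failure messages into buckets with keyword rules; the categories and patterns are hypothetical and would need tuning against your own logs.

```python
import re

# Hypothetical rules: (category, pattern matched against the failure message).
# Order matters: the first matching rule wins.
RULES = [
    ("environment", re.compile(r"connection refused|timed out|timeout|HTTP 503", re.I)),
    ("locator", re.compile(r"no such element|stale element|element not found", re.I)),
    ("assertion", re.compile(r"assert|expected .* but", re.I)),
]


def categorize(message: str) -> str:
    """Return the first matching category, or 'unknown' for manual triage."""
    for category, pattern in RULES:
        if pattern.search(message):
            return category
    return "unknown"


def summarize(messages: list) -> dict:
    """Count failures per category for a pipeline report."""
    counts = {}
    for message in messages:
        category = categorize(message)
        counts[category] = counts.get(category, 0) + 1
    return counts


report = summarize([
    "NoSuchElementException: no such element: #submit",
    "AssertionError: expected 200 but got 500",
    "Connection refused by host",
    "Segmentation fault",
])
```

Even this crude split tells a developer whether a red build points at the product, the test, or the environment.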

Incorrect Integration Looks Like:

- Running AI tests as a separate QA step

- Treating AI as a replacement for strategy

- Ignoring test data and environment control

That’s how teams waste money on “AI tools” without getting results.

The Cultural Shift: QA Teams Must Evolve

This is where most organizations struggle.

AI doesn’t just change tools; it changes roles.

Old Role: Test Executor

- Writes scripts

- Runs tests

- Reports bugs

New Role: Quality Engineer

- Designs testing strategy

- Defines risk coverage

- Oversees AI systems

- Analyzes failure patterns

If your QA team doesn’t evolve, they become obsolete.

The Hard Truth Most Teams Ignore

AI testing is not optional anymore for high-growth teams.

But here’s the part nobody tells you:

Adopting AI without fixing your fundamentals will fail.

If your system has:

- Poor architecture

- Unstable environments

- No CI/CD discipline

- Undefined quality metrics

AI will amplify your chaos, not solve it.

Where Script-Based Testing Still Makes Sense

Let’s stay realistic.

Script-based testing is not completely dead.

It still works for:

- Highly controlled environments

- Simple applications

- Legacy systems where change is minimal

But for:

- SaaS platforms

- Scalable products

- Rapid-release environments

It’s a liability.

The Strategic Shift You Need to Make

If you want to stay competitive, your roadmap should look like this:

Phase 1: Audit Your Current Testing System

- Identify maintenance-heavy areas

- Measure flakiness

- Analyze execution time
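Flakiness is measurable from CI history alone. One workable definition, assumed here, is that a test is flaky if it both passed and failed on the same commit. The tuple format and function names below are illustrative, not taken from any particular CI system.

```python
from collections import defaultdict


def flaky_tests(runs):
    """runs: iterable of (test_name, commit_sha, passed) tuples.

    A test is flagged when the same commit produced both a pass and a fail.
    """
    outcomes = defaultdict(set)
    for test_name, commit, passed in runs:
        outcomes[(test_name, commit)].add(passed)
    return sorted({name for (name, _), seen in outcomes.items() if len(seen) == 2})


def flakiness_rate(runs):
    """Share of distinct tests that were flagged as flaky."""
    runs = list(runs)
    all_tests = {name for name, _, _ in runs}
    if not all_tests:
        return 0.0
    return len(flaky_tests(runs)) / len(all_tests)


history = [
    ("login", "abc123", True),
    ("login", "abc123", False),   # same commit, different result: flaky
    ("search", "abc123", True),
    ("search", "def456", False),  # different commit: may be a real regression
]
```

Here `login` is flagged and `search` is not, since a result that changes between commits may reflect a genuine code change.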

Phase 2: Reduce UI Test Dependency

- Move logic testing to the API layer

- Keep UI tests minimal and critical

Phase 3: Introduce AI Testing Tools

Start small:

- Pilot on high-impact workflows

- Measure improvement

Phase 4: Integrate Into CI/CD

Make AI testing part of your pipeline, not an add-on.

Phase 5: Redefine QA Roles

Train your team to think like quality engineers, not script writers.

Final Reality Check

If your current setup looks like this:

- Heavy reliance on Selenium

- Large volumes of brittle UI tests

- Frequent test failures after minor updates

- QA operating as a separate function

You’re not just inefficient; you’re exposed.

Bottom Line

AI is not “enhancing” automation testing.

It is replacing the core model of how script-based testing works.

The companies that understand this early will:

- Ship faster

- Reduce costs

- Build more reliable systems

The ones that don’t will:

- Spend more

- Move slower

- Lose their competitive edge

The Only Question That Matters

Are you building a testing system that scales with your product…

or one that collapses as it grows?
