AI search integration is transforming how content is discovered, summarized, and ranked in modern search engines. In 2026, search is no longer limited to keyword matching and blue links. Artificial intelligence now interprets intent, generates structured summaries, and reshapes how users interact with information online.

Instead of simply ranking pages, AI search systems analyze semantic relationships, contextual depth, and content structure before presenting answers directly within search interfaces. This shift is fundamentally changing content strategy and SEO practices.

SEO is no longer only about visibility; it is about participation in AI-driven discovery systems.

From Blue Links to Intelligent Answers

Traditional search results relied on ranking web pages as clickable blue links. Users would:

- Enter a query

- Browse results

- Click a page

- Extract information

Today, AI models summarize multiple sources and present direct answers within the search interface itself.

This transformation includes:

- AI-generated summaries

- Conversational search results

- Multi-step guided answers

- Follow-up question prompts

- Context-aware recommendations

Content is now competing not just for rankings, but for inclusion in AI-generated responses.

How AI Search Integration Changes SEO Strategy

AI-driven search systems evaluate content differently. Instead of scanning for keyword frequency alone, they prioritize:

- Conceptual depth

- Entity relationships

- Author credibility

- Structured clarity

- Context completeness

Content that is thin, repetitive, or surface-level is less likely to be surfaced in AI summaries.

In contrast, content that demonstrates clarity, expertise, and logical structure has higher chances of being referenced.

The Rise of Structured and Extractable Content

AI models extract and summarize information more reliably when it follows clear structural patterns. This means that content optimized for AI discovery typically includes:

- Clear H2 and H3 headings

- Bullet points

- Numbered steps

- FAQs

- Definitions and explanations

- Logical topic progression

Unstructured long paragraphs are harder for AI systems to parse and summarize accurately.

Content structure now directly influences discoverability.
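One way to make "structure" concrete is to count the structural elements in a draft before publishing. The sketch below is only an illustration, assuming the draft is written in Markdown. The function name `audit_structure` and the 120-word threshold for a "long" paragraph are hypothetical choices, not a standard used by any search engine.

```python
import re


def audit_structure(markdown_text, max_paragraph_words=120):
    """Count H2/H3 headings, list items, and overly long paragraphs.

    The word threshold is an assumed editorial rule of thumb,
    not a documented ranking factor.
    """
    headings = 0
    list_items = 0
    long_paragraphs = 0

    # Blocks are separated by one or more blank lines.
    for block in re.split(r"\n\s*\n", markdown_text.strip()):
        lines = [line.strip() for line in block.splitlines() if line.strip()]
        if not lines:
            continue

        is_plain_paragraph = True
        for line in lines:
            if re.match(r"#{2,3}\s+\S", line):
                # "## Heading" or "### Heading"
                headings += 1
                is_plain_paragraph = False
            elif re.match(r"([-*+]|\d+[.)])\s+\S", line):
                # Bullet ("- item") or numbered step ("1. item")
                list_items += 1
                is_plain_paragraph = False

        word_count = len(" ".join(lines).split())
        if is_plain_paragraph and word_count > max_paragraph_words:
            long_paragraphs += 1

    return {
        "headings": headings,
        "list_items": list_items,
        "long_paragraphs": long_paragraphs,
    }
```

A draft that reports zero headings and several long paragraphs is a candidate for restructuring. The counts are a prompt for editorial review, not a score.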

Multimodal Discovery Is Expanding

Search is no longer purely text-based. AI integration supports:

- Image interpretation

- Video summarization

- Voice queries

- Conversational responses

- Cross-platform search experiences

Content creators must consider multiple formats when designing assets.

For example:

- A blog post may appear as a summarized snippet

- An infographic may be extracted into a featured answer

- A video transcript may inform conversational AI responses

Content discovery is now multi-layered.

The Impact on Click-Through Behavior

One of the most significant changes in AI-integrated search is its effect on traffic patterns.

Because AI answers often provide summaries directly in search results, users may not always click through to the original source.

This introduces new strategic questions:

- How do brands maintain visibility if clicks decrease?

- How should content provide value beyond summaries?

- What motivates users to visit the full page?

The answer lies in depth and differentiation.

Surface-level answers may be summarized, but original insights, case studies, frameworks, and expert analysis still drive engagement.

As AI search integration evolves, content must be structured for extractability and semantic clarity rather than keyword repetition.

Authority Signals Matter More Than Ever

AI systems prioritize trustworthy sources. Signals that influence AI inclusion include:

- Author expertise

- Brand authority

- Backlink credibility

- Consistent publishing

- Topical depth

Content ecosystems built around topic clusters perform better than isolated posts.

For example, rather than publishing a single article on SEO, organizations now build:

- Core pillar content

- Supporting subtopics

- Case studies

- Technical breakdowns

- Expert commentary

AI favors comprehensive topical authority.

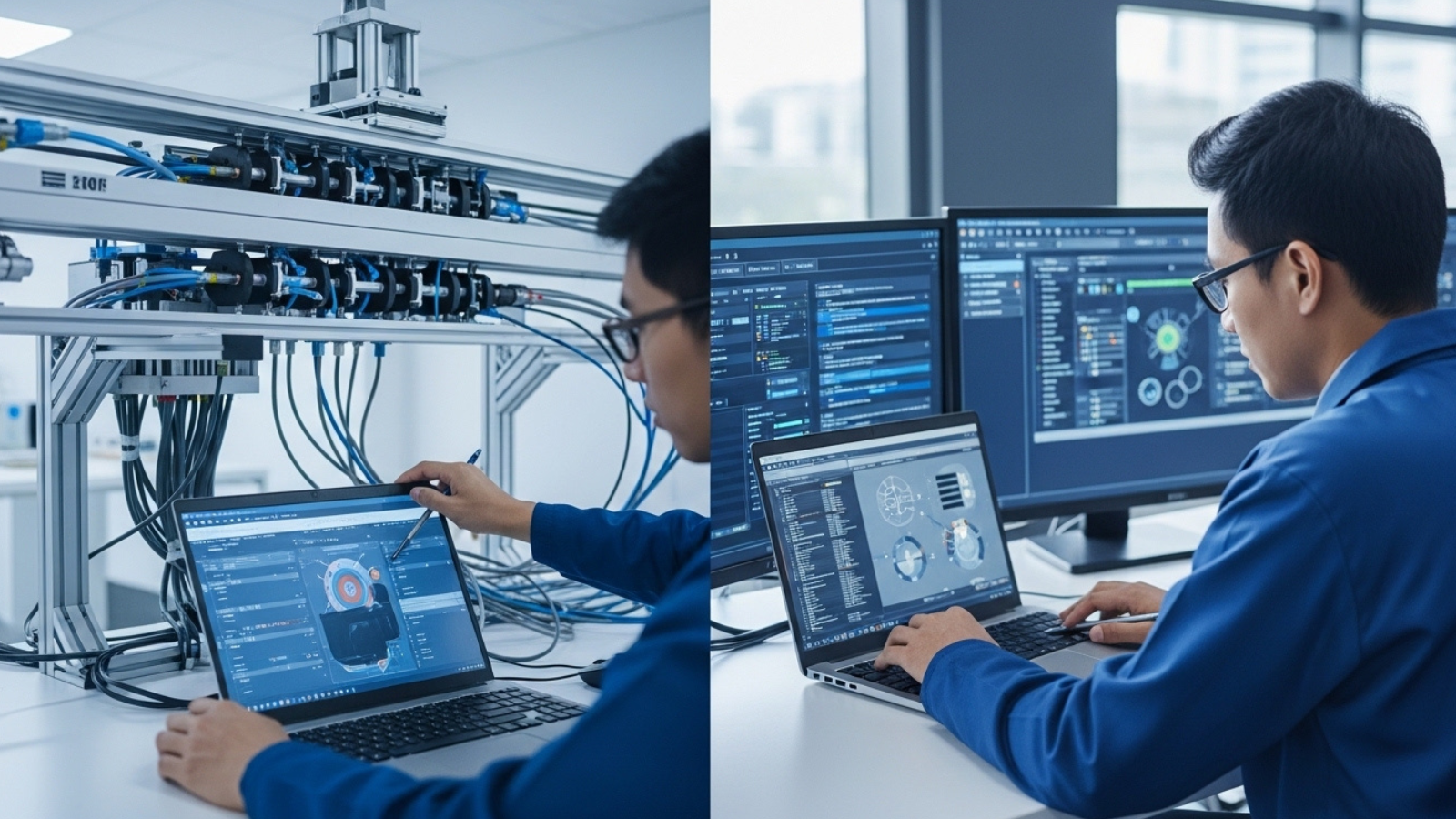

Organizations that understand AI search integration are better positioned to outperform competitors still relying on traditional ranking tactics.

Topic Clusters Over Keywords

The integration of AI into search accelerates the shift from keyword-based SEO to intent-based SEO.

Instead of targeting individual search terms, successful strategies focus on:

- Topic coverage

- User journey alignment

- Related question mapping

- Contextual completeness

AI models connect ideas rather than matching isolated phrases.

Content strategy must reflect that evolution.
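Topic coverage and related question mapping can be tracked with a very simple data structure. The following is a minimal sketch, assuming a team keeps a list of questions for each cluster and records which questions each published page answers. The function `coverage_gaps` and the example URLs are hypothetical.

```python
def coverage_gaps(cluster_questions, published_pages):
    """Return cluster questions that no published page answers yet.

    cluster_questions: list of question strings for one topic cluster.
    published_pages: dict mapping a page URL to the questions it answers.
    Matching is case-insensitive exact match, a deliberate simplification.
    """
    answered = set()
    for page_questions in published_pages.values():
        answered.update(q.lower() for q in page_questions)
    return [q for q in cluster_questions if q.lower() not in answered]


cluster = [
    "What is AI search integration?",
    "How does structured content help AI summaries?",
    "How do topic clusters work?",
]
pages = {
    "/ai-search-guide": ["What is AI search integration?"],
    "/topic-clusters": ["how do topic clusters work?"],
}
gaps = coverage_gaps(cluster, pages)
```

Here `gaps` contains only the structured content question, which shows where the cluster still needs a supporting article.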

First-Party Engagement Signals Are Increasingly Important

With AI search integration reducing some click-through behavior, engagement quality becomes more critical.

Signals that search engines may weigh include:

- Time on page

- Scroll depth

- Repeat visits

- Content interaction

- Bounce rate

User satisfaction signals influence long-term ranking and visibility in AI systems.

SEO now overlaps more closely with UX and content experience design.

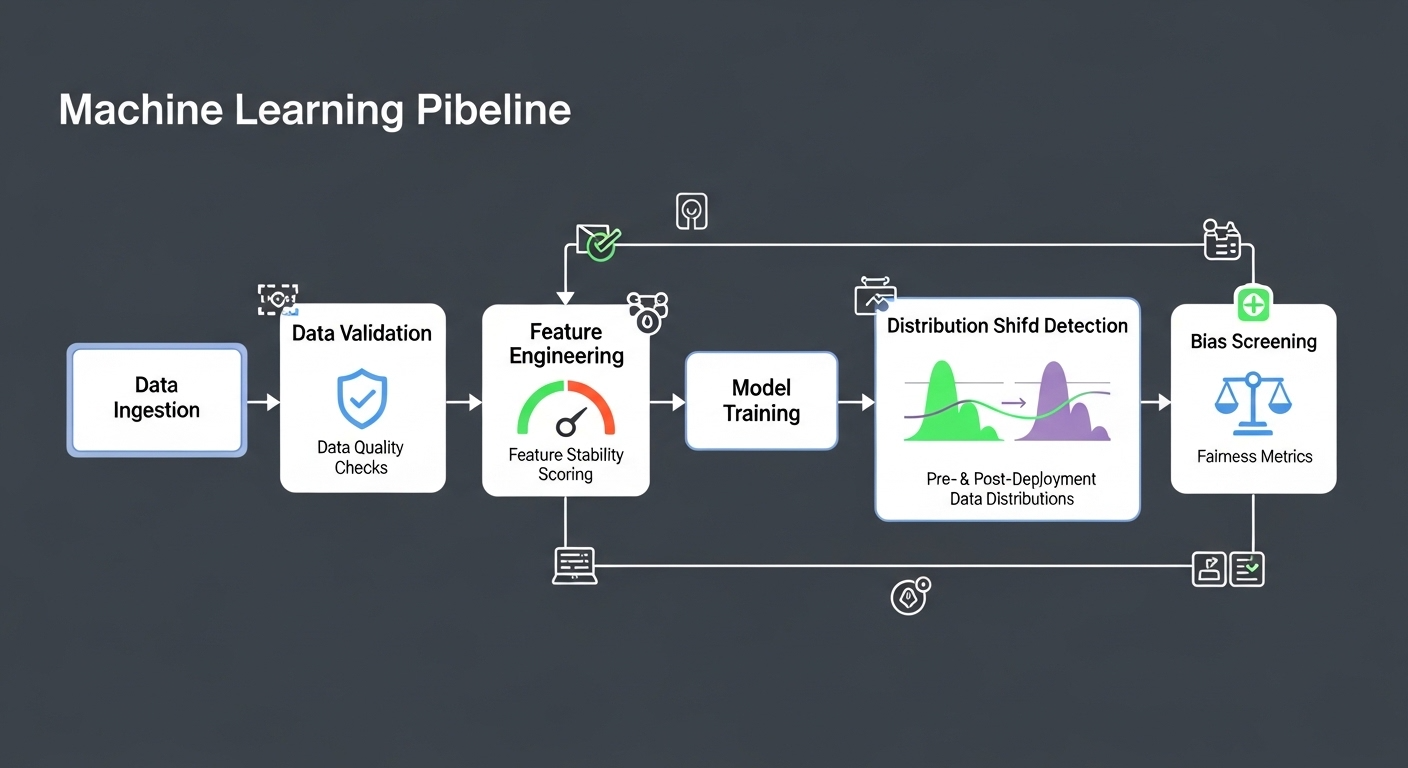

The Role of AI in Content Creation

AI is not only transforming search; it is also influencing content production.

Content teams now use AI tools for:

- Topic ideation

- Outline structuring

- Keyword clustering

- Content optimization suggestions

- Performance forecasting

However, AI-generated content alone is insufficient.

AI integration in search systems favors originality, expertise, and differentiated insight, not generic summaries.

Human-driven strategic thinking remains essential.

Challenges of AI Search Integration

Despite its benefits, AI-driven search introduces challenges:

1. Reduced Traffic Transparency

Summarized results may obscure referral patterns.

2. Attribution Complexity

AI-generated answers may aggregate multiple sources without clear credit.

3. Increased Competition for Authority

Brands must compete not only for ranking but for inclusion in summary models.

Organizations must adapt measurement frameworks to account for new discovery dynamics.

Strategic Recommendations for 2026

To succeed in AI-integrated search environments, organizations should:

- Build topic clusters, not isolated articles

- Structure content clearly for extractability

- Demonstrate expertise through case studies and data

- Use schema markup where appropriate

- Optimize for user intent rather than keyword density

- Focus on engagement depth beyond surface answers

The goal is not just ranking; it is inclusion, authority, and sustained trust.
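As an illustration of the schema markup recommendation, here is a minimal sketch that builds FAQ markup as JSON-LD using the schema.org `FAQPage`, `Question`, and `Answer` types. The helper name `faq_jsonld` and the sample question are assumptions made for this example. Whether a given search engine uses this markup in AI-generated answers is not something this sketch can guarantee.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


markup = faq_jsonld([
    (
        "What is AI search integration?",
        "The use of AI models to interpret intent and summarize sources in search results.",
    ),
])
```

The resulting string is typically embedded in the page inside a `<script type="application/ld+json">` element, and the questions and answers in the markup should match the FAQ content that is visible on the page.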

Conclusion

AI integration is reshaping content discovery at a structural level. Search engines are evolving from index-and-rank systems into interpret-and-answer systems.

This shift changes how content is evaluated, displayed, and consumed. Visibility now depends on semantic depth, structural clarity, and authority signals.

Organizations that adapt to AI-driven discovery models will maintain influence in the evolving search landscape. Those that rely solely on traditional SEO tactics risk declining visibility.

In the age of AI search integration, content strategy must be intelligent, structured, and authoritative.

Search is no longer about links. It is about understanding.

AI search integration is not a temporary shift; it represents a permanent transformation in how digital content is evaluated and delivered.
