Software testing is undergoing one of the biggest transformations in its history. For years, test automation focused on writing scripts, executing predefined flows, and validating expected outcomes. While this approach helped organizations accelerate software delivery, it also created major operational challenges such as flaky tests, heavy script maintenance, framework instability, and scalability issues.

Now, a completely new era is emerging.

Agentic AI Testing

This next-generation approach is rapidly becoming one of the most important innovations in quality assurance and software engineering.

Unlike traditional automation systems that simply follow instructions, agentic AI systems are designed to think, adapt, learn, and make testing decisions autonomously.

These intelligent testing agents can:

- understand applications,

- analyze workflows,

- adapt to UI changes,

- generate test scenarios,

- identify risks,

- repair failed scripts,

- and continuously improve testing processes without constant human involvement.

As businesses push for faster release cycles and more reliable software, agentic AI testing is becoming a major competitive advantage across the technology industry.

The Evolution of Software Testing

To understand why agentic AI testing matters so much, it is important to understand how software testing evolved over time.

Phase 1: Manual Testing Era

In the early days of software development, testing was completely manual.

QA engineers would:

- click through applications,

- verify workflows,

- document bugs,

- and repeat regression tests manually.

Although this method worked for smaller applications, it became extremely inefficient as software systems grew more complex.

Major problems included:

- slow execution,

- human error,

- limited scalability,

- repetitive workloads,

- and delayed releases.

As companies started releasing software more frequently, manual testing alone became unsustainable.

Phase 2: Automation Testing Revolution

To solve scalability problems, organizations adopted automation frameworks and tools such as:

- Selenium

- Cypress

- Appium

- TestComplete

- UFT

- Robot Framework

Automation testing allowed teams to:

- execute tests faster,

- improve regression coverage,

- reduce repetitive manual work,

- and integrate testing into CI/CD pipelines.

This was a major improvement for the software industry.

However, automation also introduced new challenges.

The Hidden Problems of Traditional Automation

Although automation improved speed, it created operational burdens that many companies still struggle with today.

1. Endless Script Maintenance

Traditional automation frameworks depend heavily on:

- element locators,

- fixed workflows,

- hardcoded logic,

- and predefined assertions.

Even small UI changes can break entire test suites.

For example:

- changing a button ID,

- moving a menu,

- updating a form field,

- or redesigning a page

can instantly cause automation failures.

QA teams can end up spending more time fixing tests than testing applications.

This creates massive maintenance overhead.

2. Flaky Tests Are Destroying Productivity

One of the biggest frustrations in QA today is flaky testing.

A flaky test:

- passes sometimes,

- fails randomly,

- behaves inconsistently,

- and creates false alarms.

This damages trust in the automation pipeline.

Developers begin ignoring failures because they assume the test itself is unstable.

Eventually:

- deployment slows,

- debugging increases,

- and software quality declines.
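The definition of a flaky test above can be made concrete. The following is a minimal sketch, assuming a hypothetical run history in which each record holds a test name, the code revision it ran against, and whether it passed. A test that both passes and fails on the same revision is flagged, because the code did not change between those runs.

```python
from collections import defaultdict


def find_flaky_tests(runs):
    """Return names of tests that both passed and failed on the same revision.

    `runs` is an iterable of (test_name, revision, passed) tuples. This
    record shape is an assumption made for illustration only.
    """
    outcomes = defaultdict(set)
    for test_name, revision, passed in runs:
        outcomes[(test_name, revision)].add(passed)
    # Mixed outcomes on unchanged code point to nondeterminism in the
    # test or its environment rather than to a product change.
    return sorted({name for (name, _), seen in outcomes.items()
                   if seen == {True, False}})


history = [
    ("test_login", "abc123", True),
    ("test_login", "abc123", False),     # same revision, different result
    ("test_checkout", "abc123", False),
    ("test_checkout", "abc123", False),  # consistently failing, not flaky
    ("test_search", "abc123", True),
    ("test_search", "def456", False),    # different revision, not flaky
]
flaky = find_flaky_tests(history)
print(flaky)
```

A consistently failing test is deliberately not reported here: it signals a real defect or a broken test, which is a different problem from flakiness.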

3. Automation Cannot Truly Think

Traditional scripts follow fixed instructions.

They do not:

- understand business logic,

- interpret user behavior,

- or adapt dynamically.

If the expected path changes, the test usually fails.

This makes automation rigid and fragile.

4. Modern Applications Became Too Complex

Today’s software ecosystems are far more advanced than they were ten years ago.

Modern platforms include:

- cloud infrastructure,

- APIs,

- AI integrations,

- microservices,

- mobile apps,

- multi-browser environments,

- real-time systems,

- and distributed architectures.

Maintaining large automation suites across these systems is becoming increasingly difficult.

Why Agentic AI Testing Emerged

The software industry needed something more intelligent than traditional automation.

Companies wanted systems capable of:

- adapting automatically,

- learning continuously,

- reducing maintenance,

- and improving testing efficiency.

This demand gave rise to Agentic AI Testing.

What Exactly Is Agentic AI Testing?

Agentic AI testing refers to testing systems in which AI agents plan, execute, and adjust tests on their own toward a stated goal, rather than replaying a fixed script.

These systems behave more like human QA engineers than scripted bots.

Instead of following static instructions, AI agents can:

- observe applications,

- understand workflows,

- analyze context,

- make decisions,

- and adapt dynamically.

This fundamentally changes how testing is performed.

Traditional Automation vs Agentic AI Testing

Traditional Automation

Traditional automation:

- follows fixed rules,

- executes scripted flows,

- requires constant maintenance,

- breaks easily,

- and lacks adaptability.

Agentic AI Testing

Agentic systems:

- learn from behavior,

- adapt to changes,

- self-heal broken tests,

- generate new test scenarios,

- optimize execution,

- and improve continuously.

The difference is enormous.

It is similar to comparing a calculator with an intelligent assistant.

Core Technologies Behind Agentic AI Testing

Several advanced technologies power modern agentic systems.

1. Large Language Models (LLMs)

Modern AI agents leverage LLMs to:

- interpret natural language,

- understand workflows,

- generate testing logic,

- and reason about application behavior.

This enables users to create tests using plain English instructions.

Example:

“Verify that a customer can complete checkout using a credit card.”

The AI translates this into executable automated tests.

2. Machine Learning

Machine learning allows testing systems to:

- analyze historical failures,

- detect patterns,

- predict risk areas,

- and optimize test execution.

As more run history accumulates, these predictions can become more accurate.

3. Computer Vision

AI-powered visual testing systems use computer vision to:

- identify UI elements,

- detect layout changes,

- validate visual consistency,

- and adapt to interface redesigns.

This reduces dependency on fragile locators.
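Real visual testing tools rely on trained vision models, which do not fit in a short example. As a much simpler stand-in for the "detect layout changes" step, here is a plain pixel comparison, not computer vision proper. It assumes two screenshots already converted to equally sized grids of grayscale values; the function name and the tolerance value are illustrative choices.

```python
def changed_fraction(baseline, current, tolerance=10):
    """Fraction of pixels whose grayscale value differs by more than `tolerance`.

    Both images are equally sized 2D lists of integers in the range 0-255.
    """
    if len(baseline) != len(current) or any(
        len(a) != len(b) for a, b in zip(baseline, current)
    ):
        raise ValueError("images must have the same dimensions")
    total = sum(len(row) for row in baseline)
    if total == 0:
        return 0.0
    changed = sum(
        abs(a - b) > tolerance
        for row_a, row_b in zip(baseline, current)
        for a, b in zip(row_a, row_b)
    )
    return changed / total


baseline = [
    [255, 255, 255, 255],
    [255,   0,   0, 255],
    [255, 255, 255, 255],
]
current = [
    [255, 255, 255, 255],
    [255, 255,   0,   0],   # the dark block moved one pixel to the right
    [255, 255, 250, 255],   # small rendering noise, within tolerance
]
fraction = changed_fraction(baseline, current)
print(f"{fraction:.1%} of pixels changed")
```

The tolerance is what keeps minor rendering noise from being reported as a layout change. A production system would need something far more robust than a per-pixel threshold.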

4. Behavioral Analytics

Agentic systems study:

- user journeys,

- application workflows,

- and interaction patterns

to identify high-risk testing areas automatically.

Key Features of Agentic AI Testing

Self-Healing Automation

Self-healing is one of the most powerful capabilities of intelligent testing systems.

When an application changes its:

- UI structure,

- button positions,

- workflows,

- or element attributes,

the AI looks for an alternative way to locate the affected element or complete the step.

This dramatically reduces script failures.
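One common way to approximate self-healing is a fallback locator. The sketch below is a simplified illustration under stated assumptions: the page is modeled as a list of attribute dictionaries rather than a real browser DOM, and `find_element` and `min_score` are hypothetical names, not part of any testing library. If the recorded ID no longer exists, the element that matches the most remaining recorded attributes is chosen.

```python
def find_element(dom, locator, min_score=0.5):
    """Locate an element by id, falling back to the closest attribute match.

    `dom` is a list of attribute dicts standing in for a rendered page and
    `locator` holds the attributes recorded when the test was written.
    Returns (element, healed), or (None, False) if nothing is close enough.
    """
    wanted_id = locator.get("id")
    if wanted_id is not None:
        for element in dom:
            if element.get("id") == wanted_id:
                return element, False  # original locator still works

    keys = [k for k in locator if k != "id"]

    def score(element):
        # Fraction of recorded non-id attributes that still match.
        if not keys:
            return 0.0
        return sum(element.get(k) == locator[k] for k in keys) / len(keys)

    best = max(dom, key=score, default=None)
    if best is not None and score(best) >= min_score:
        return best, True  # healed: matched on the remaining attributes
    return None, False


recorded = {"id": "submit-btn", "tag": "button",
            "text": "Place order", "class": "primary"}
page = [
    {"id": "cancel", "tag": "button", "text": "Cancel", "class": "secondary"},
    {"id": "order-submit", "tag": "button",
     "text": "Place order", "class": "primary"},  # the ID was renamed
]
element, healed = find_element(page, recorded)
print(element["id"], healed)
```

Returning the `healed` flag lets a team log every substitution for review instead of trusting it silently.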

Autonomous Test Generation

Traditional testing requires manual script creation.

Agentic AI can automatically:

- generate test cases,

- identify edge cases,

- explore workflows,

- and expand test coverage.

This saves enormous time for QA teams.

Natural Language Test Creation

One of the biggest breakthroughs is the ability to create tests using conversational language.

This makes automation more accessible to:

- business analysts,

- product managers,

- non-technical stakeholders,

- and manual testers.

The barrier to automation becomes significantly lower.

Intelligent Prioritization

AI systems can identify:

- high-risk modules,

- unstable workflows,

- frequently failing areas,

- and critical business paths.

This helps teams focus testing efforts strategically.
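To illustrate the idea, here is a minimal prioritization sketch. It is a hand-written heuristic rather than a trained model, and the record fields, the `critical_weight` value, and the scoring formula are all assumptions chosen for the example. Each test is scored by its recent failure rate, with a fixed bonus if it covers a critical business path.

```python
from dataclasses import dataclass


@dataclass
class HistoryRecord:
    name: str
    recent_results: list   # True = passed, most recent run last
    critical_path: bool    # covers a critical business flow


def risk_score(record, critical_weight=0.5):
    """Recent failure rate plus a fixed bonus for critical business paths."""
    if not record.recent_results:
        failure_rate = 1.0  # no history yet: treat as unknown and run early
    else:
        failures = record.recent_results.count(False)
        failure_rate = failures / len(record.recent_results)
    return failure_rate + (critical_weight if record.critical_path else 0.0)


def prioritize(records):
    """Return test names ordered from highest to lowest risk score."""
    return [r.name for r in sorted(records, key=risk_score, reverse=True)]


suite = [
    HistoryRecord("profile", [True, True, True, True], critical_path=False),
    HistoryRecord("checkout", [True, False, True, False], critical_path=True),
    HistoryRecord("search", [True, True, False, True], critical_path=False),
    HistoryRecord("login", [True, True, True, True], critical_path=True),
]
order = prioritize(suite)
print(order)
```

Running the highest-scoring tests first means the riskiest areas report results early in a pipeline, even when the full suite takes much longer.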

Predictive Defect Analysis

Advanced AI agents can predict:

- where bugs are likely to occur,

- which releases carry higher risk,

- and which components require deeper validation.

This shifts QA from reactive testing toward proactive quality engineering.

Autonomous Exploratory Testing

Traditional exploratory testing depends heavily on human creativity.

Agentic systems can autonomously:

- navigate applications,

- try unexpected paths,

- perform random interactions,

- and discover hidden issues.

This significantly improves test coverage.
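The "random interactions" part of this can be sketched as a random walk over a model of the application. The example below assumes a hypothetical app described as a dictionary that maps each screen to its available actions, with the marker `"ERROR"` standing in for a crash. A real agent would drive an actual UI and use far smarter action selection than a uniform random choice.

```python
import random


def explore(app, start, steps, seed=None):
    """Random walk over a screen graph, recording actions that crash.

    `app` maps each screen to {action: next_screen}. A next_screen of
    "ERROR" represents a crash. This model is illustrative only.
    """
    rng = random.Random(seed)
    state = start
    path = [start]
    failures = []
    for _ in range(steps):
        actions = app.get(state, {})
        if not actions:
            state = start  # dead end: restart from the entry screen
            path.append(state)
            continue
        action = rng.choice(sorted(actions))
        next_state = actions[action]
        if next_state == "ERROR":
            failures.append((state, action))
            next_state = start  # restart after a crash
        state = next_state
        path.append(state)
    return path, failures


app = {
    "home": {"open_cart": "cart", "search": "results"},
    "results": {"add_item": "cart", "back": "home"},
    "cart": {"checkout": "payment", "apply_empty_coupon": "ERROR"},
    "payment": {"pay": "home"},
}
path, failures = explore(app, "home", steps=200, seed=7)
print(f"visited {len(set(path))} screens, {len(failures)} crashes recorded")
```

Passing a seed makes a run reproducible, which matters when a discovered failure has to be replayed for debugging.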

Real-World Applications of Agentic AI Testing

Financial Services

Banks and fintech companies use AI testing for:

- payment validation,

- transaction reliability,

- fraud prevention systems,

- security workflows,

- and compliance testing.

Because financial systems require extremely high accuracy, intelligent testing systems provide major operational benefits.

Healthcare Platforms

Healthcare applications require:

- stability,

- security,

- and regulatory compliance.

AI-powered testing helps validate:

- patient systems,

- appointment workflows,

- medical records,

- and healthcare portals.

E-Commerce Platforms

E-commerce companies use agentic testing to monitor:

- shopping carts,

- payment gateways,

- inventory systems,

- recommendation engines,

- and customer journeys.

This helps keep user experiences smooth during high-traffic periods.

SaaS Companies

Software-as-a-Service businesses benefit greatly from:

- continuous testing,

- rapid deployment validation,

- multi-browser compatibility,

- and scalable regression coverage.

Agentic systems support faster software delivery cycles.

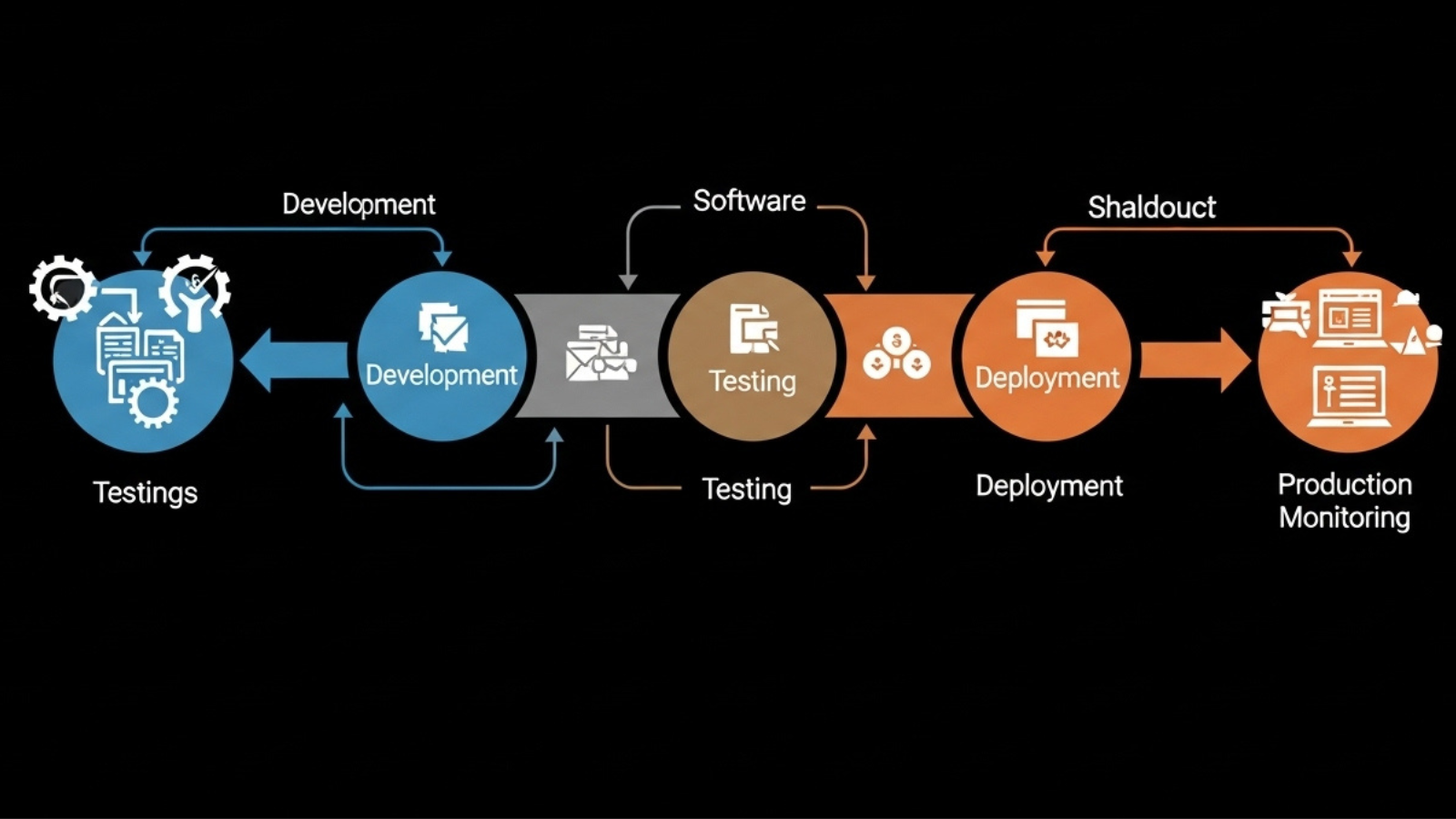

Impact on DevOps and CI/CD

Modern software delivery relies heavily on:

- DevOps,

- CI/CD pipelines,

- and rapid deployment strategies.

Traditional automation often becomes a bottleneck because:

- maintenance slows pipelines,

- flaky tests delay releases,

- and debugging consumes engineering time.

Agentic AI testing improves CI/CD reliability by:

- reducing instability,

- adapting automatically,

- and accelerating validation processes.

This enables organizations to release software faster with greater confidence.

How QA Roles Are Changing

Many people fear AI will replace QA engineers entirely.

That is not what is happening.

Instead, QA roles are evolving.

The Shift From Testers to Quality Engineers

Future QA professionals will focus more on:

- testing strategy,

- risk analysis,

- quality architecture,

- AI supervision,

- and intelligent automation management.

Routine scripting tasks will increasingly be handled by AI agents.

Skills QA Professionals Need in 2026

To remain competitive, testers now need modern skills such as:

- Playwright,

- API testing,

- AI-assisted automation,

- cloud testing,

- CI/CD integration,

- observability tools,

- prompt engineering,

- and intelligent QA systems.

The industry is moving toward Quality Intelligence Engineering rather than traditional script-based testing.

Major Benefits of Agentic AI Testing

Faster Software Releases

AI systems reduce testing bottlenecks dramatically.

Organizations can:

- deploy faster,

- validate releases quickly,

- and accelerate innovation cycles.

Reduced Operational Costs

Self-healing automation reduces maintenance expenses significantly.

Companies save:

- engineering time,

- QA effort,

- and debugging costs.

Better Software Reliability

Intelligent testing systems improve:

- bug detection,

- regression stability,

- and production reliability.

Improved Scalability

AI-powered systems scale better across:

- browsers,

- devices,

- cloud environments,

- APIs,

- and distributed systems.

Increased Test Coverage

AI agents explore application paths humans often overlook.

This improves overall software quality.

Challenges of Agentic AI Testing

Despite its advantages, the technology still faces several challenges.

Trust and Transparency

Many organizations still hesitate to trust fully autonomous systems.

Teams want:

- explainability,

- validation,

- and oversight.

Human supervision remains important.

Data Privacy Concerns

AI systems often require access to:

- application workflows,

- customer behavior,

- and testing data.

This creates compliance and security concerns.

Integration Complexity

Integrating AI testing into legacy environments can be difficult.

Older systems may require major modernization efforts.

Skill Gaps

Many QA teams are not yet trained for:

- AI-assisted workflows,

- intelligent automation,

- or advanced quality engineering.

Upskilling is becoming essential.

The Future of Autonomous Testing

The future of software testing is moving toward fully intelligent ecosystems.

In the coming years, AI agents may:

- generate tests automatically,

- monitor production continuously,

- detect anomalies in real time,

- predict failures before deployment,

- and optimize quality strategies autonomously.

Testing will become:

- faster,

- smarter,

- more adaptive,

- and deeply integrated into software engineering itself.

Why Businesses Are Investing Heavily in Agentic AI

Organizations today prioritize:

- speed,

- scalability,

- reliability,

- and customer experience.

Agentic AI testing directly supports all these goals.

Businesses adopting intelligent QA systems gain:

- faster release cycles,

- improved software stability,

- reduced QA costs,

- better operational efficiency,

- and stronger competitive advantages.

This is why investment in AI-driven testing platforms is growing rapidly worldwide.

Final Thoughts

Agentic AI testing represents one of the most important transformations in the software industry.

The world is moving beyond static automation scripts and rigid frameworks.

The future belongs to:

- intelligent testing systems,

- adaptive QA ecosystems,

- and autonomous software quality engineering.

Organizations that adopt these technologies early will gain enormous advantages in:

- speed,

- innovation,

- scalability,

- and software reliability.

Meanwhile, QA professionals who learn AI-assisted testing skills will become highly valuable in the evolving technology landscape.

The testing industry is no longer just about executing scripts.

It is becoming an ecosystem of intelligent autonomous agents capable of continuously improving software quality at scale.

The age of traditional automation is slowly fading.

The age of Agentic AI Testing has already begun.
