Quality engineering metrics are becoming central to business strategy as organizations connect software performance directly to financial outcomes. For many years, however, quality engineering operated behind the scenes. Teams focused on reducing defects, increasing automation coverage, improving regression efficiency, and maintaining release stability. These metrics were critical to engineering teams but rarely made their way to boardrooms.

That separation no longer exists.

In 2026, quality engineering metrics are being integrated directly into business KPIs. Executives now understand that software quality influences revenue performance, customer retention, operational risk, brand reputation, and competitive advantage.

Quality is no longer a technical report. It is a strategic business indicator.

The Evolution of Quality Engineering

Phase 1: Bug Detection

Quality teams were primarily responsible for finding defects before release.

Phase 2: Automation and Efficiency

Organizations invested in automation to accelerate release cycles and reduce manual effort.

Phase 3: Continuous Delivery Integration

Quality shifted left and right, embedding testing into CI/CD pipelines and production monitoring.

Phase 4: Business Alignment (Current Phase)

Quality metrics now correlate directly with financial and operational KPIs.

This evolution reflects the reality that digital products are no longer support functions; they are primary revenue engines.

How Quality Engineering Metrics Drive Business Performance

1. Software Is Revenue Infrastructure

In retail, e-commerce platforms drive transactions.

In fintech, apps process financial activity.

In SaaS, uptime determines subscription retention.

A defect in production is no longer an inconvenience; it is a financial event.

Executives now ask:

- How much revenue is at risk due to quality gaps?

- What is the cost per hour of downtime?

- How do defects affect customer lifetime value?

Quality engineering must answer these questions with measurable data.

2. Customer Experience Defines Brand Value

Customers no longer differentiate between technical failures and brand failures. A broken feature or slow-loading page directly impacts perception.

Quality metrics now include:

- User journey stability

- Page load performance

- Conversion impact after release

- Feature adoption consistency

These are business metrics disguised as quality signals.

3. Digital Risk Is Board-Level Risk

Cyber incidents, outages, and performance failures are now governance concerns. Boards expect transparency into:

- Change failure rate

- Incident frequency

- Recovery time

- Release risk level

Quality engineering has become a risk management function.

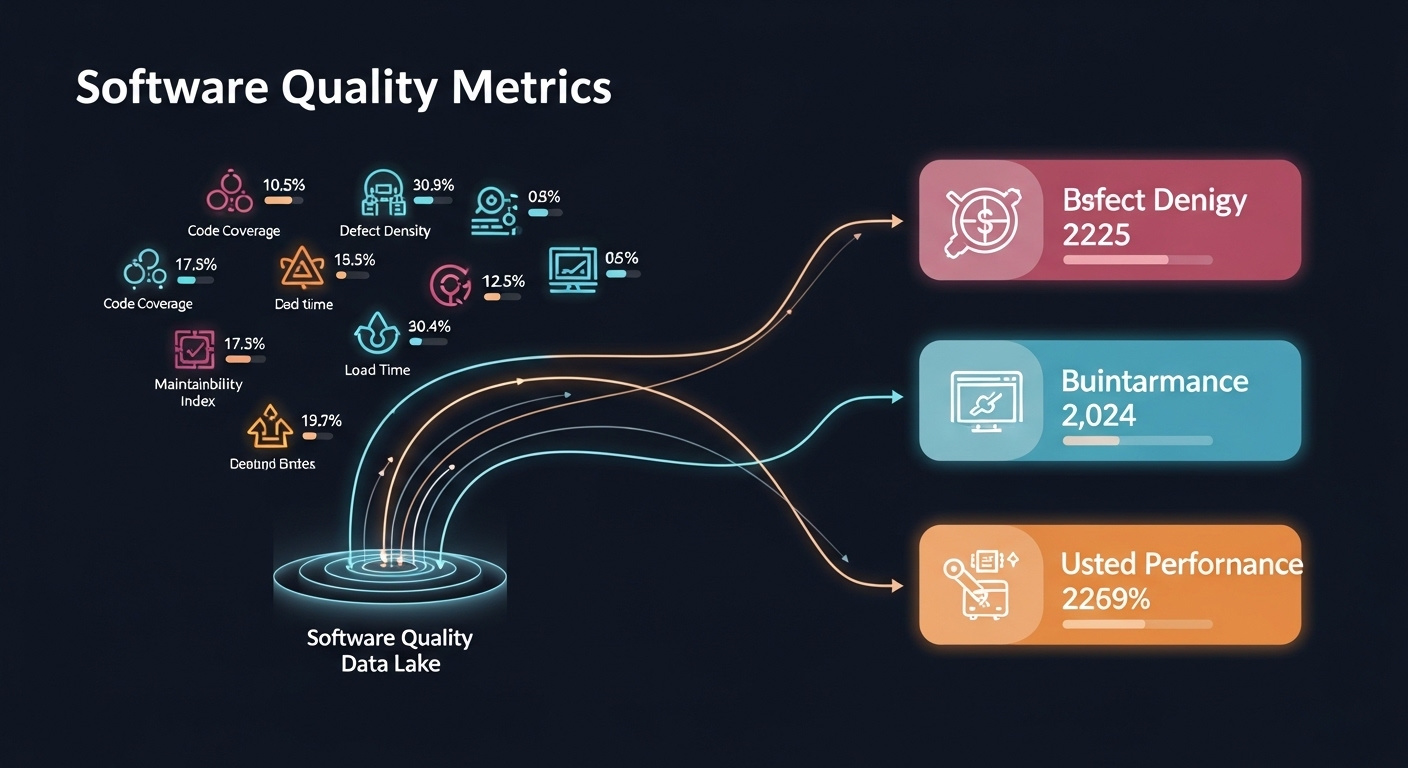

Mapping Quality Metrics to Business KPIs

To align quality with business strategy, organizations are redefining traditional metrics.

1. Defect Escape Rate → Revenue Risk Index

Rather than simply reporting escaped bugs, teams now calculate:

- Revenue lost per incident

- Conversion drop during outage

- Refund and compensation impact

- Customer churn associated with defects

Quality data feeds financial forecasting models.
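To make the idea concrete, here is a minimal sketch of how the four inputs above could be rolled up into a single number. The `Incident` fields, the function names, and the formula itself are assumptions made for illustration; real attribution of churn and lost conversions to a specific defect is usually far less clean.

```python
from dataclasses import dataclass


@dataclass
class Incident:
    """One escaped defect that reached production (all fields are illustrative)."""
    duration_hours: float             # how long users were affected
    baseline_revenue_per_hour: float  # revenue normally expected in that window
    conversion_drop: float            # fraction of conversions lost, 0.0 to 1.0
    refunds: float                    # refunds and compensation paid out
    churned_customers: int            # customers lost and attributed to the incident
    avg_customer_lifetime_value: float


def revenue_impact(incident: Incident) -> float:
    """Estimate the revenue impact of a single incident."""
    lost_sales = (
        incident.duration_hours
        * incident.baseline_revenue_per_hour
        * incident.conversion_drop
    )
    churn_cost = incident.churned_customers * incident.avg_customer_lifetime_value
    return lost_sales + incident.refunds + churn_cost


def revenue_risk_index(incidents: list[Incident], period_revenue: float) -> float:
    """Share of period revenue lost to escaped defects (0.0 means no impact)."""
    if period_revenue <= 0:
        raise ValueError("period_revenue must be positive")
    return sum(revenue_impact(i) for i in incidents) / period_revenue
```

Expressing the result as a share of period revenue, rather than a raw currency amount, makes it easier to compare quarters of different sizes.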

2. Change Failure Rate → Operational Stability KPI

Frequent rollback events reduce trust and slow innovation. Organizations measure:

- Percentage of deployments causing incidents

- Cost of remediation

- Delays in feature rollout

This aligns DevOps metrics with executive performance dashboards.
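As a rough sketch, the first two measures can be derived from a simple deployment log. The record shape (`caused_incident`, `remediation_hours`) and the hourly rate are hypothetical; in practice this data would come from a deployment tool and an incident tracker.

```python
def change_failure_rate(deployments: list[dict]) -> float:
    """Fraction of deployments that caused an incident (0.0 to 1.0)."""
    if not deployments:
        return 0.0
    failed = sum(1 for d in deployments if d["caused_incident"])
    return failed / len(deployments)


def remediation_cost(deployments: list[dict], hourly_rate: float) -> float:
    """Engineering cost of fixing failed deployments, at a flat hourly rate."""
    hours = sum(
        d.get("remediation_hours", 0.0)
        for d in deployments
        if d["caused_incident"]
    )
    return hours * hourly_rate


# Example log: four deployments, one of which triggered an incident.
deployment_log = [
    {"id": "d1", "caused_incident": False},
    {"id": "d2", "caused_incident": True, "remediation_hours": 6.0},
    {"id": "d3", "caused_incident": False},
    {"id": "d4", "caused_incident": False},
]
rate = change_failure_rate(deployment_log)
cost = remediation_cost(deployment_log, hourly_rate=100.0)
```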

3. Mean Time to Detect (MTTD) & Mean Time to Recover (MTTR) → Customer Retention Signal

Faster detection reduces impact. Faster recovery protects loyalty.

Companies now track:

- Minutes of user impact

- Retention drop during incidents

- Support ticket volume spikes

Quality metrics become leading indicators of churn.
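The timing metrics can be computed from three timestamps per incident. The sketch below assumes each incident record carries `started`, `detected`, and `resolved` times (hypothetical field names), and it measures recovery from the moment of detection; some teams measure MTTR from the start of the failure instead, so the definition should be agreed before the number is reported.

```python
from datetime import datetime
from statistics import mean


def minutes_between(start: datetime, end: datetime) -> float:
    """Elapsed time between two timestamps, in minutes."""
    return (end - start).total_seconds() / 60


def incident_timing(incidents: list[dict]) -> dict:
    """Compute MTTD, MTTR, and total minutes of user impact."""
    if not incidents:
        raise ValueError("at least one incident is required")
    return {
        # Mean time from failure start to detection.
        "mttd_minutes": mean(
            minutes_between(i["started"], i["detected"]) for i in incidents
        ),
        # Mean time from detection to recovery (an assumed definition).
        "mttr_minutes": mean(
            minutes_between(i["detected"], i["resolved"]) for i in incidents
        ),
        # Total time users were affected, from start to recovery.
        "user_impact_minutes": sum(
            minutes_between(i["started"], i["resolved"]) for i in incidents
        ),
    }
```

The `user_impact_minutes` total is the figure that can then be lined up against retention and support ticket data for the same time windows.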

4. Automation Coverage → Cost Optimization Metric

Automation is reframed from coverage percentage to financial outcome:

- Manual hours saved

- Release cycle acceleration

- Cost per deployment reduction

Automation investments are evaluated through an ROI lens.
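A simple way to frame that evaluation is the classic ROI ratio: net savings divided by cost. The function and parameter names below are illustrative assumptions, and the sketch deliberately ignores harder-to-price benefits such as faster release cycles.

```python
def automation_roi(
    manual_hours_saved: float,
    hourly_cost: float,
    automation_cost: float,
) -> float:
    """Return ROI as a ratio: 1.5 means a 150% return on the automation spend."""
    if automation_cost <= 0:
        raise ValueError("automation_cost must be positive")
    savings = manual_hours_saved * hourly_cost
    return (savings - automation_cost) / automation_cost


def cost_per_deployment(total_pipeline_cost: float, deployments: int) -> float:
    """Average cost of one deployment over a reporting period."""
    if deployments <= 0:
        raise ValueError("deployments must be positive")
    return total_pipeline_cost / deployments
```

Comparing `cost_per_deployment` before and after an automation initiative gives the reduction figure listed above.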

The Role of Observability in Business-Driven Quality

Observability tools bridge the gap between technical signals and business outcomes.

Modern systems connect:

- Error rates → Transaction failures

- API latency → Abandoned sessions

- Infrastructure instability → SLA penalties

- Performance degradation → Revenue decline

This correlation transforms testing into real-time performance assurance.
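One basic way to test whether a technical signal actually moves with a business outcome is a Pearson correlation over matching time windows. The sketch below assumes two equal-length series, for example hourly API latency and hourly abandoned sessions; the sample numbers are made up for illustration, and correlation alone does not prove that one causes the other.

```python
from math import sqrt


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient between two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("series must have the same length, at least 2")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return covariance / sqrt(var_x * var_y)


# Illustrative hourly samples (not real data).
api_latency_ms = [120.0, 180.0, 250.0, 400.0, 650.0]
abandoned_sessions = [14.0, 19.0, 27.0, 41.0, 70.0]
latency_vs_abandonment = pearson(api_latency_ms, abandoned_sessions)
```

A value close to 1 suggests the two series rise together, which is a reasonable trigger for a deeper investigation rather than a conclusion in itself.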

Shift-right practices, including canary releases, chaos engineering, and production validation, enhance business alignment.

Modern enterprises now treat quality engineering metrics as leading indicators of revenue protection and customer trust.

Executive Dashboards: The New Quality Framework

Today’s leadership dashboards often include:

- Revenue at risk due to current defects

- Digital stability score

- Release confidence index

- SLA compliance percentage

- Business impact of incidents

- Customer sentiment after release

Quality now appears in quarterly business reviews and strategic planning sessions.

Cultural Transformation in Engineering Teams

Aligning quality metrics with business KPIs changes engineering culture.

From “Did Tests Pass?”

To:

“Did This Release Protect Revenue and Customer Trust?”

Engineers become outcome-focused rather than output-focused.

Quality teams collaborate more closely with:

- Product management

- Finance teams

- Customer success teams

- Risk management teams

Quality becomes cross-functional.

Challenges in Integrating Quality and Business Metrics

Despite the benefits, integration presents obstacles.

1. Data Integration Complexity

Correlating engineering data with financial systems requires unified analytics platforms.

2. Metric Overload

Too many metrics can dilute focus. Strategic prioritization is essential.

3. Cultural Resistance

Some engineering teams resist outcome-based evaluation. Leadership alignment is necessary.

Successful implementation requires both technological capability and cultural maturity.

The Strategic Advantage of Business-Aligned Quality

Organizations that integrate quality metrics into business KPIs gain:

- Clear visibility into digital risk exposure

- Faster decision-making during incidents

- Improved release confidence

- Stronger investor confidence

- More accurate revenue forecasting

Quality becomes predictive rather than reactive.

The Future of Quality Engineering

Looking ahead, we can expect:

- AI-driven predictive defect models

- Automated risk scoring for releases

- Real-time quality-health indicators tied to business dashboards

- Continuous validation integrated with product analytics

Quality engineering will increasingly function as a strategic intelligence layer within digital enterprises.

Conclusion

Quality engineering metrics are no longer confined to internal engineering reports. They are now central to business strategy, revenue protection, and customer trust.

By integrating quality signals into business KPIs, organizations move from defect detection to value preservation. They align technical excellence with financial performance and customer experience.

In today’s digital economy, quality is not just about preventing bugs. It is about safeguarding growth, stability, and competitive advantage.

Quality engineering is now a business discipline. As digital transformation accelerates, quality engineering metrics will continue shaping executive decision-making and long-term growth strategy.
