For years, threat modeling was treated as a separate security exercise, typically conducted at the beginning of a project or during compliance reviews. Functional testing, on the other hand, focused purely on validating whether a system behaved as expected.

In 2026, that separation is disappearing.

Threat modeling is increasingly being embedded directly into functional test suites, transforming security from a periodic checkpoint into a continuous validation mechanism. This shift reflects a broader change in how organizations approach software quality, risk management, and digital resilience.

The Traditional Gap Between Testing and Security

Historically, functional testing answered one primary question:

Does the system work as intended?

Security testing, meanwhile, asked:

Can the system be exploited?

Because these efforts were handled by separate teams and tools, critical vulnerabilities often emerged late in the development lifecycle. Threat modeling sessions were conducted as documentation exercises rather than operational safeguards.

This siloed model no longer works in environments defined by:

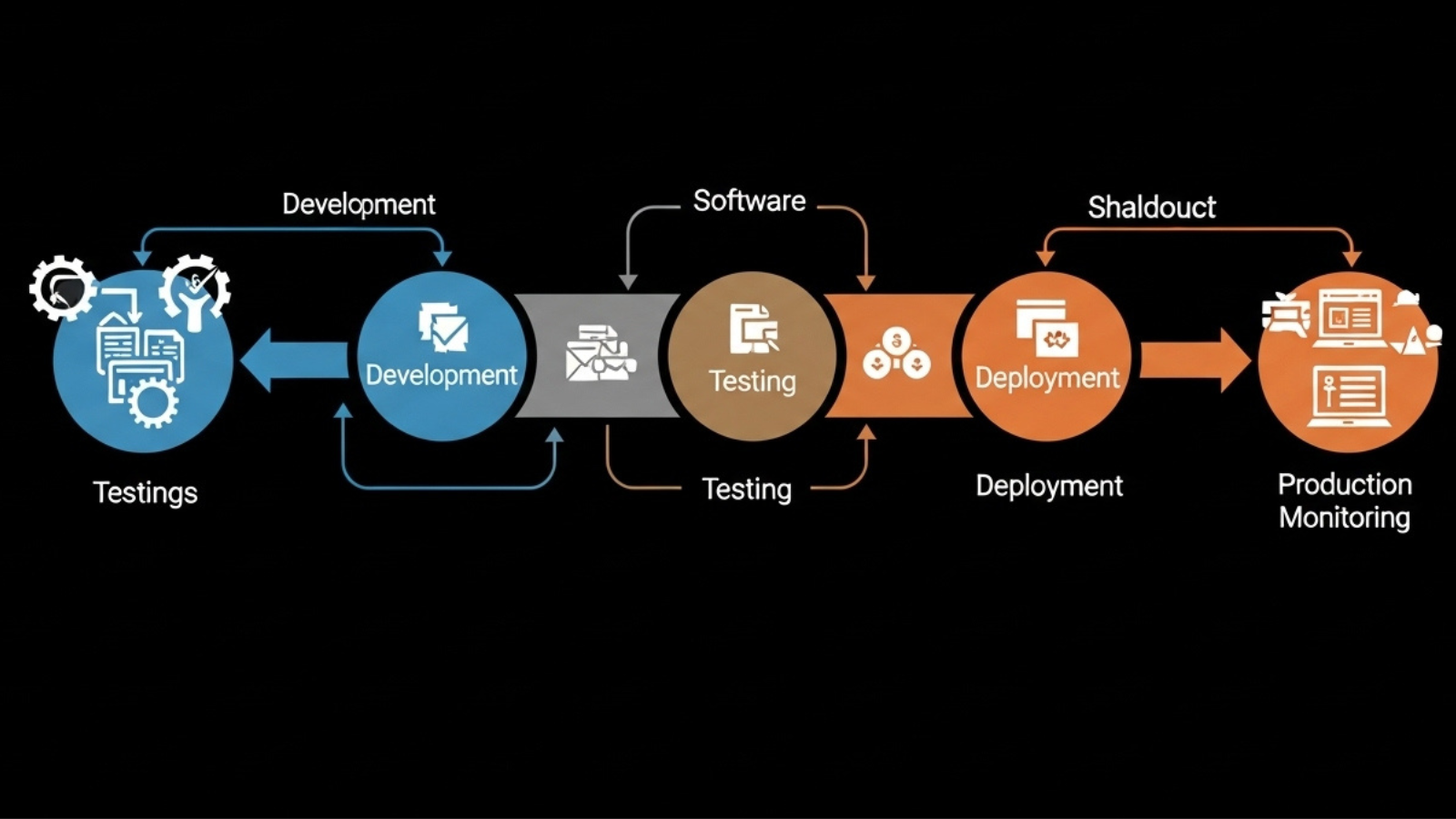

- Continuous integration and deployment

- Cloud-native infrastructure

- API-driven architectures

- Rapid feature releases

Security risks evolve at the same speed as application code.

What It Means to Integrate Threat Modeling Into Functional Testing

When threat modeling becomes part of functional test suites, it changes how requirements are written, how tests are designed, and how systems are validated.

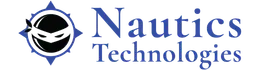

Instead of testing only for expected behavior, teams also test for:

- Misuse scenarios

- Privilege escalation attempts

- Data exposure risks

- Authentication bypass conditions

- Rate-limiting failures

Threat scenarios are translated into executable test cases.

This integration ensures that every functional validation cycle also verifies that security assumptions hold true.
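As a rough illustration of turning threat scenarios into executable test cases, the sketch below places one functional test next to two threat-driven tests (a privilege escalation attempt and a rate-limiting failure). `AccountService`, its users, and its limits are hypothetical stand-ins invented for this example, not part of any real framework.

```python
from dataclasses import dataclass, field


class Forbidden(Exception):
    """Raised when a caller lacks permission for an action."""


class TooManyRequests(Exception):
    """Raised when a caller exceeds the allowed number of failed logins."""


@dataclass
class AccountService:
    """Hypothetical in-memory service standing in for a real application."""
    max_login_attempts: int = 3
    roles: dict = field(default_factory=lambda: {"alice": "admin", "bob": "user"})
    passwords: dict = field(default_factory=lambda: {"alice": "a-secret", "bob": "b-secret"})
    failed_attempts: dict = field(default_factory=dict)

    def login(self, user: str, password: str) -> bool:
        # Block further attempts once the failure limit is reached.
        if self.failed_attempts.get(user, 0) >= self.max_login_attempts:
            raise TooManyRequests(user)
        if self.passwords.get(user) == password:
            self.failed_attempts[user] = 0
            return True
        self.failed_attempts[user] = self.failed_attempts.get(user, 0) + 1
        return False

    def delete_user(self, actor: str, target: str) -> None:
        # Only admins may delete accounts.
        if self.roles.get(actor) != "admin":
            raise Forbidden(actor)
        self.roles.pop(target, None)


def test_admin_can_delete_user():
    # Functional case: the feature works as intended.
    svc = AccountService()
    svc.delete_user("alice", "bob")
    assert "bob" not in svc.roles


def test_regular_user_cannot_delete_user():
    # Threat scenario: privilege escalation attempt.
    svc = AccountService()
    try:
        svc.delete_user("bob", "alice")
    except Forbidden:
        pass
    else:
        raise AssertionError("non-admin was allowed to delete a user")
    assert "alice" in svc.roles


def test_login_is_rate_limited():
    # Threat scenario: password guessing must be cut off after repeated failures.
    svc = AccountService()
    for _ in range(svc.max_login_attempts):
        assert svc.login("bob", "wrong") is False
    try:
        svc.login("bob", "b-secret")
    except TooManyRequests:
        pass
    else:
        raise AssertionError("login was not blocked after repeated failures")
```

The test functions follow the `test_` naming convention, so a runner such as pytest would collect the security cases in the same run as the functional one.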

Why This Shift Is Happening Now

Several factors are driving this transformation:

DevSecOps Maturity

Organizations have adopted DevSecOps practices, embedding security tools directly into CI/CD pipelines. As security becomes automated, it naturally aligns with automated testing frameworks.

API and Microservices Architecture

Modern systems expose numerous endpoints. Traditional perimeter security is insufficient. Threat modeling must evaluate how each service behaves under malicious conditions.

Rising Cost of Breaches

Data breaches, ransomware incidents, and compliance violations have demonstrated that reactive security is expensive. Prevention requires earlier detection of flawed logic.

Regulatory Pressure

Industries with strict compliance requirements now demand evidence of proactive risk identification. Integrated threat modeling supports auditability.

How Threat Modeling Enhances Functional Test Coverage

Embedding threat modeling improves test quality in multiple ways:

- Functional tests simulate malicious input patterns

- Authorization boundaries are validated automatically

- Data flow paths are verified for exposure risks

- Error handling is tested for information leakage

Testing evolves from confirming success cases to validating resilience.

In practice, this means:

- Adding negative test cases

- Simulating abnormal system states

- Stress testing authentication workflows

- Validating encryption enforcement

Security becomes measurable within quality metrics.
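One way to picture negative test cases is a small table of hostile inputs fed through an ordinary handler, with assertions that each is rejected and that the error body reveals nothing internal. The handler `handle_quantity`, the payload list, and the leak patterns below are all assumptions made for illustration; a real suite would derive them from its own threat model.

```python
import re

# Illustrative hostile inputs: injection strings, path traversal, oversized and empty values.
MALICIOUS_INPUTS = [
    "' OR '1'='1",
    "<script>alert(1)</script>",
    "../../etc/passwd",
    "9" * 10_000,
    "",
]

# Hypothetical markers that would suggest internals leaked into an error body.
LEAK_PATTERNS = [
    re.compile(p, re.IGNORECASE)
    for p in (r"traceback", r"exception", r"\.py\b", r"select .* from")
]


def handle_quantity(raw: str) -> dict:
    """Hypothetical handler: parse an order quantity and return a response dict."""
    try:
        if len(raw) > 6:
            raise ValueError("too long")
        quantity = int(raw)
        if not 1 <= quantity <= 1000:
            raise ValueError("out of range")
    except ValueError:
        # Generic message only; the specific reason is not echoed to the caller.
        return {"status": 400, "body": "Invalid quantity."}
    return {"status": 200, "body": f"Ordered {quantity} item(s)."}


def leaks_internals(body: str) -> bool:
    """Return True if the response body matches any leak pattern."""
    return any(p.search(body) for p in LEAK_PATTERNS)


def run_negative_cases() -> list:
    """Return the payloads that were mishandled; an empty list means all passed."""
    failures = []
    for payload in MALICIOUS_INPUTS:
        response = handle_quantity(payload)
        if response["status"] != 400 or leaks_internals(response["body"]):
            failures.append(payload)
    return failures
```

Because `run_negative_cases` returns a list, the number of mishandled payloads can be reported alongside other quality metrics rather than hidden in a separate scan report.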


From Static Diagrams to Dynamic Validation

Traditional threat modeling often relied on architectural diagrams and static analysis sessions. While valuable, these methods lacked continuous validation.

Modern integration converts threat models into:

- Automated security assertions

- Pipeline-based validation scripts

- Continuous compliance checks

- Runtime behavior monitoring triggers

Threat intelligence feeds can even update test logic dynamically.

This shift moves threat modeling from theoretical risk discussion to executable security enforcement.
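A minimal sketch of what an executable threat model might look like: each threat is stored as data together with a check that returns true when its mitigation holds, and a pipeline step evaluates every check against the deployed configuration. The `Threat` class, the three threat entries, and the configuration keys are hypothetical and chosen only to show the shape of the idea.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Threat:
    """One threat model entry paired with an automated assertion."""
    threat_id: str
    description: str
    check: Callable[[dict], bool]  # returns True when the mitigation holds


# Hypothetical threat model; the configuration keys are assumptions for this example.
THREAT_MODEL = [
    Threat("T1", "Traffic can be read in transit",
           lambda c: c.get("tls_required") is True),
    Threat("T2", "Sessions never expire",
           lambda c: 0 < c.get("session_ttl_minutes", 0) <= 60),
    Threat("T3", "Debug endpoints exposed in production",
           lambda c: not c.get("debug_enabled", False)),
]


def evaluate(threats: list, config: dict) -> dict:
    """Run every threat check against a configuration snapshot."""
    return {t.threat_id: t.check(config) for t in threats}


def failed_threats(results: dict) -> list:
    """Return the IDs of threats whose mitigation did not hold, sorted."""
    return sorted(tid for tid, ok in results.items() if not ok)
```

A pipeline step could call `failed_threats(evaluate(THREAT_MODEL, config))` and fail the build when the list is non-empty, so a new threat is added by appending an entry rather than redrawing a diagram.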

Organizational Impact of Integrated Threat Modeling

When threat modeling becomes part of functional testing, organizational dynamics change.

Development Teams

Developers become more aware of potential abuse cases and design with defensive patterns.

QA Teams

Quality assurance expands scope beyond correctness to include resilience testing.

Security Teams

Security professionals collaborate earlier and continuously rather than acting as late-stage gatekeepers.

This collaborative approach reduces friction and shortens remediation cycles.

Benefits of Integrating Threat Modeling Into Functional Test Suites

Organizations that adopt this model can expect:

- Earlier detection of logical vulnerabilities

- Reduced false positives from standalone security scans

- Improved compliance documentation

- Faster release cycles with lower risk

- Greater confidence in production stability

Security becomes an inherent characteristic of the system rather than an external overlay.

Challenges to Consider

Despite its advantages, integration requires:

- Skilled cross-functional collaboration

- Updated test automation frameworks

- Clear threat modeling methodologies

- Ongoing maintenance of threat scenarios

However, the long-term reduction in breach risk typically outweighs the initial implementation effort.

The Future of Security Testing

Looking forward, threat modeling will likely integrate with:

- AI-driven anomaly detection

- Behavior-based risk scoring

- Continuous runtime validation

- Automated exploit simulation

Functional test suites will not only verify that systems work; they will also verify that systems resist exploitation.

Security testing and functional testing will become inseparable components of quality engineering.

Conclusion

Threat modeling is no longer a standalone documentation task. It is becoming a practical, automated, and measurable part of functional test suites.

As digital systems grow more interconnected and complex, security cannot remain a separate phase. It must be validated continuously, alongside performance and reliability.

Organizations that integrate threat modeling into functional testing frameworks build more resilient software, reduce risk exposure, and strengthen long-term digital trust.

In modern software engineering, functionality without security is incomplete. Integrated threat validation is the new standard.
