Introduction: The Hiring Mindset Has Fundamentally Changed

For years, hiring was treated as a growth signal. More people meant more momentum, more credibility, and more capacity. Headcount became a proxy for success.

In 2026, that mindset is gone.

Companies are still hiring, but in a very different way. The focus has shifted from how many people a company employs to what actually gets executed. Roles are approved not because teams are stretched, but because specific outcomes cannot be delivered without them.

This is not a temporary slowdown. It’s a structural change in how organizations grow.

The End of Headcount-Driven Growth

The traditional model was linear:

- Work increases → hire more people

- Complexity increases → add managers

- Coordination slows → add processes

Over time, this led to:

- Bloated teams

- Rising costs without proportional output

- Slower decision-making

- Diluted accountability

Leadership teams have learned, often the hard way, that headcount growth does not guarantee execution capacity.

In fact, it often reduces it.

Execution Is Now the Scarce Resource

In 2026, most organizations don’t lack ideas, roadmaps, or strategies. They lack execution bandwidth.

Execution means:

- Shipping working systems

- Closing deals

- Automating processes

- Reducing operational friction

- Delivering measurable outcomes

Hiring is now justified only when it clearly improves one of these.

If a role cannot be tied to execution, it doesn’t get approved.

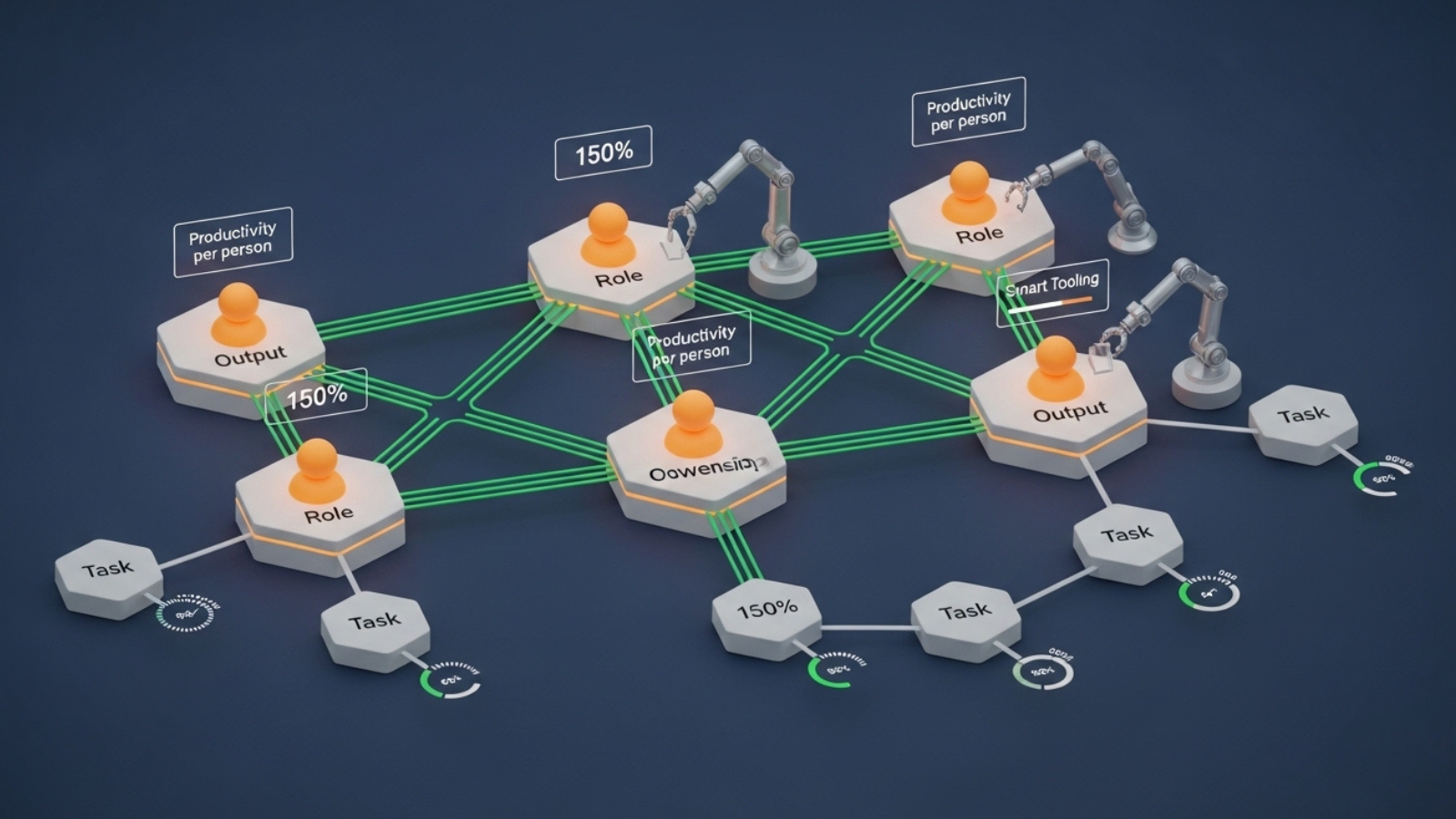

AI Accelerated This Shift

AI didn’t eliminate jobs, but it redefined leverage.

Tasks that once required entire teams can now be handled by:

- Smaller, AI-augmented groups

- Automated workflows

- Integrated systems

This has changed the hiring question from:

“Do we need more people?”

to

“Can we execute this better with fewer, higher-impact people and better tools?”

The answer is increasingly yes.

As a result, companies are hiring fewer people but expecting more ownership per role.

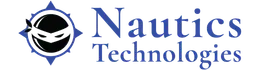

From Role Coverage to Outcome Ownership

The traditional model focused on role coverage:

- Someone to manage

- Someone to coordinate

- Someone to support

Execution-driven hiring focuses on outcome ownership.

Modern job approvals answer:

- What result will this person own?

- What breaks if we don’t hire them?

- How will success be measured in 90 days?

Roles without clear outcomes are quietly disappearing.

Why Generalist Roles Are Shrinking

Generalist roles thrived in growth-at-all-costs environments. In execution-focused organizations, they struggle.

Why?

- Execution requires depth

- Specialists unblock delivery faster

- Clear ownership reduces handoffs

Companies now prefer:

- Engineers who own systems

- QAOps engineers who own quality pipelines

- Marketers who own revenue outcomes

- Consultants who own implementation

This doesn’t mean versatility is irrelevant, but impact must be visible.

Hiring Is Now Tied Directly to ROI

In 2026, every hire competes with:

- Automation

- Process redesign

- Internal upskilling

Leaders ask:

- Is hiring the fastest path to impact?

- Is it the most cost-effective option?

- Can we upskill someone internally instead?

This financial discipline has made hiring slower and more deliberate, but far more effective.

Upskilling Is Replacing External Hiring

Many companies are executing more by transforming existing talent.

Examples include:

- QA engineers becoming QAOps specialists

- Developers learning AI-assisted workflows

- Analysts moving into automation roles

- Managers becoming hands-on operators

Upskilling:

- Reduces ramp-up time

- Lowers cultural risk

- Preserves institutional knowledge

Execution improves without expanding headcount.

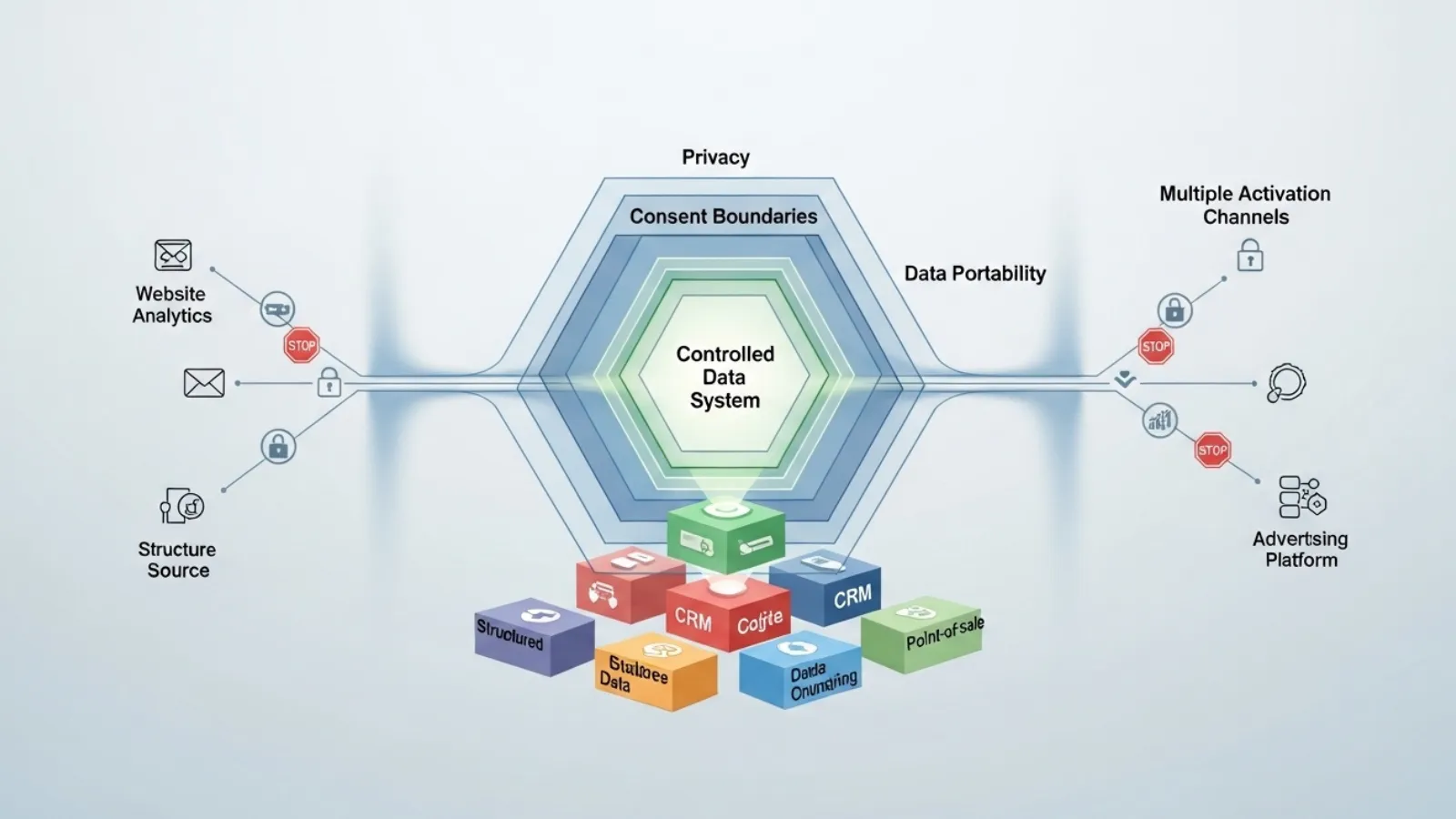

Why Managers Are Also Being Hired Differently

The shift to execution impacts leadership roles as well.

Companies are no longer hiring managers whose primary function is coordination. They want leaders who:

- Can make decisions

- Can remove blockers

- Can deliver outcomes directly

In leaner organizations, managers are closer to the work. Execution-first hiring favors doers who can lead, not overseers who delegate.

Employer Branding Has Become an Execution Signal

In a selective hiring market, candidates evaluate companies as carefully as companies evaluate them.

High-impact candidates look for:

- Clear expectations

- Real ownership

- Evidence of execution

- Technical and operational maturity

Organizations that over-promise and under-deliver struggle to hire execution-oriented talent.

Employer branding now reflects how work actually gets done, not just culture slogans.

The New Hiring Questions Companies Ask

Execution-focused organizations consistently ask:

- What business problem does this role solve?

- How will we measure impact quickly?

- What decisions will this person own?

- How does this role scale with tools and automation?

If answers are vague, hiring stops.

What This Means for Candidates

For professionals, this shift raises the bar, but it also increases opportunity.

Execution-focused hiring rewards people who:

- Own outcomes

- Work independently

- Leverage tools effectively

- Communicate impact clearly

Job titles matter less than proof of execution.

Those who can show results move faster, even in cautious markets.

What This Means for Leaders

If you’re leading a company in 2026, execution-based hiring requires:

- Clear priorities

- Honest assessment of bottlenecks

- Willingness to say no to low-impact roles

- Investment in tools and upskilling

The goal is not to be understaffed.

It’s to be highly leveraged: fewer people, each with more impact.

Why This Model Is More Resilient

Execution-focused organizations:

- Scale without bloat

- Adapt faster to market shifts

- Control costs more effectively

- Maintain accountability

When conditions change, smaller, execution-oriented teams adjust faster than large, loosely aligned ones.

Final Thoughts: Execution Is the New Hiring Currency

Companies haven’t stopped hiring. They’ve stopped hiring by habit.

In 2026, hiring is no longer about:

- Team size

- Organizational optics

- Future potential alone

It’s about what gets delivered.

Organizations that hire for execution build momentum with fewer people, less friction, and clearer accountability. Those that don’t will continue to grow teams without growing results.

The market has spoken:

Execution beats headcount. Every time.