Introduction: Quality Assurance Is No Longer a Phase. It’s a System

For years, Quality Assurance lived at the end of the software lifecycle. Code was written, features were “done,” and then QA stepped in to validate what already existed. That model is broken.

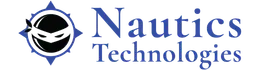

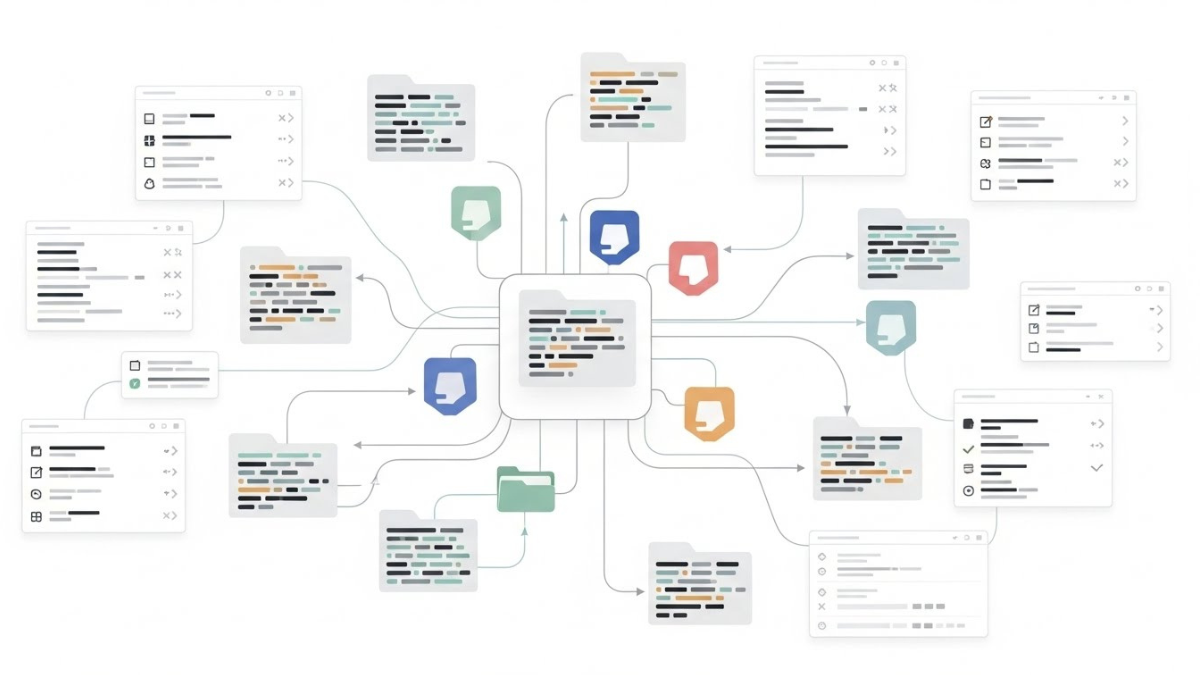

In 2026, speed is non-negotiable. Releases happen daily, sometimes hourly. In this environment, traditional Quality Assurance simply cannot keep up. The result is a fundamental shift: QAOps, the integration of quality assurance directly into DevOps pipelines through continuous testing, automation, and real-time feedback.

QAOps isn’t a trend. It’s a survival mechanism.

What Is QAOps Really?

QAOps is not just “more automation” or “testing earlier.” It’s a systemic change in how quality is owned, measured, and delivered.

At its core, QAOps means:

- Testing is continuous, not scheduled

- Quality is everyone’s responsibility, not just QA’s

- Feedback loops are automated and immediate

- Testing lives inside CI/CD pipelines

- Production behavior informs future tests

In short, QAOps treats quality as an operational capability, not a checkpoint.

Why Traditional QA Failed at Scale

1. Testing Happens Too Late

When Quality Assurance is a final gate, defects are discovered after:

- Architectural decisions are locked

- Timelines are compressed

- Fixes are expensive

Late testing increases risk instead of reducing it.

2. Manual Bottlenecks Don’t Scale

Manual regression cycles can’t keep pace with:

- Microservices architectures

- Frequent releases

- Multi-platform applications

Teams either skip testing or accept lower confidence.

3. QA Is Isolated From Delivery

When Quality Assurance works separately from DevOps:

- Test environments drift

- Failures lack context

- Feedback arrives too late

This isolation turns Quality Assurance into a blocker instead of an enabler.

QAOps exists because this model no longer works.

Continuous Testing: The Backbone of QAOps

Continuous testing is the engine that powers QAOps. It ensures that every change is validated automatically, across the lifecycle.

Continuous testing includes:

- Unit tests triggered on every commit

- API and integration tests in pipelines

- UI tests on critical paths

- Performance and security checks

- Monitoring and validation in production

The goal isn’t “100% automation.”

The goal is continuous confidence.
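As a rough illustration of how these layers might map onto pipeline events, here is a minimal Python sketch. The trigger names and suite names are hypothetical and not tied to any specific CI tool. The only point is that cheap suites run often and heavier ones run closer to release.

```python
from enum import Enum


class Trigger(Enum):
    """Pipeline events that can start a test run (hypothetical names)."""
    COMMIT = "commit"
    PULL_REQUEST = "pull_request"
    PRE_RELEASE = "pre_release"
    POST_DEPLOY = "post_deploy"


# Assumed mapping from pipeline event to test suites.
# Fast suites run on every event; slower ones run only near release.
SUITES_BY_TRIGGER = {
    Trigger.COMMIT: ["unit"],
    Trigger.PULL_REQUEST: ["unit", "api", "integration"],
    Trigger.PRE_RELEASE: [
        "unit", "api", "integration",
        "ui_critical_paths", "performance", "security",
    ],
    Trigger.POST_DEPLOY: ["production_smoke", "production_monitoring"],
}


def suites_for(trigger: Trigger) -> list[str]:
    """Return a copy of the suite names to run for the given trigger."""
    return list(SUITES_BY_TRIGGER[trigger])
```

A CI job could call `suites_for(Trigger.PULL_REQUEST)` and hand the result to whatever test runner the team already uses.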

Shift-Left + Shift-Right: QAOps in Practice

QAOps combines two powerful approaches:

Shift-Left Testing

Testing moves earlier into:

- Requirements

- Design

- Development

This reduces defect cost and improves clarity.

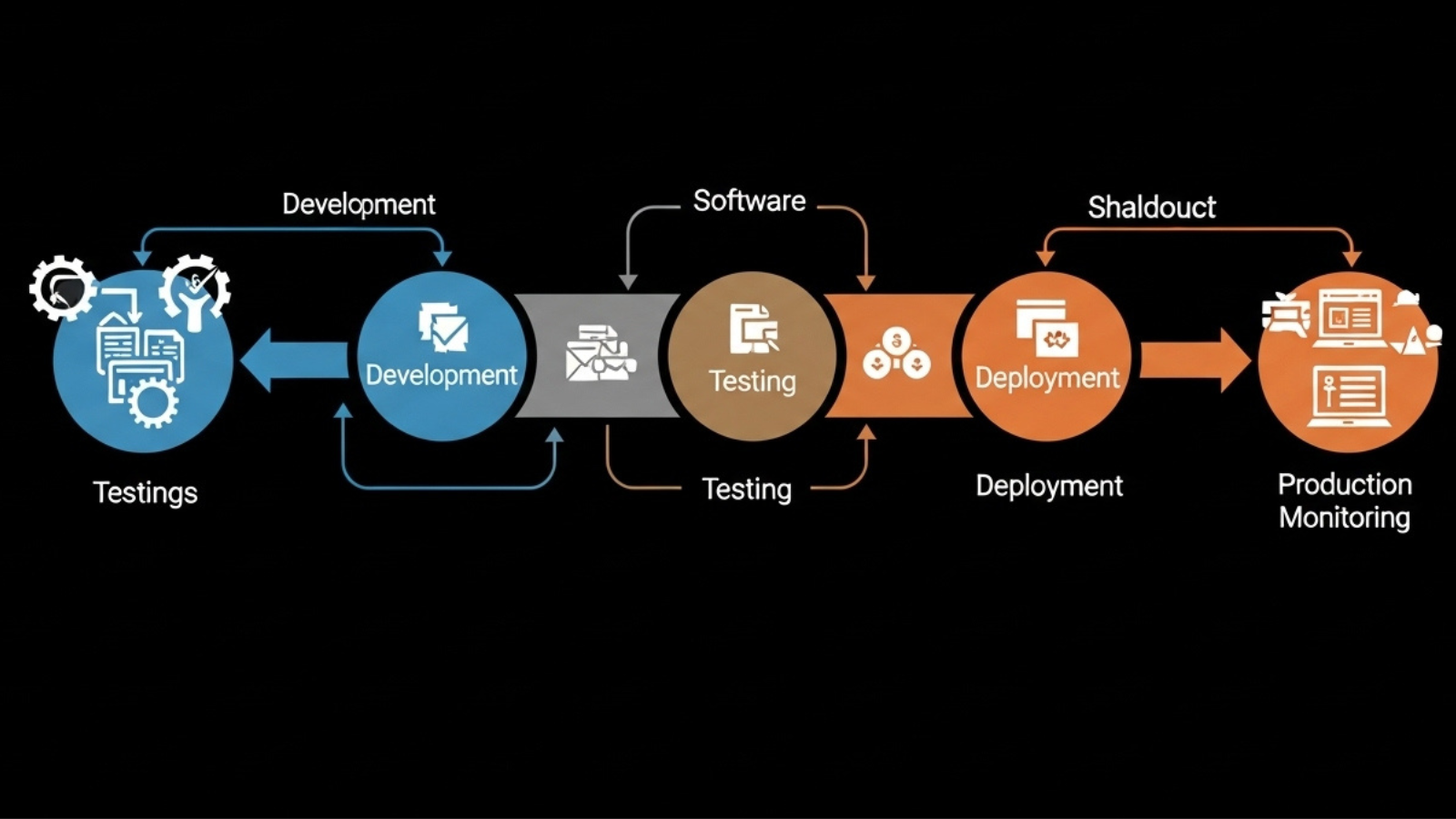

Shift-Right Testing

Quality doesn’t stop at release. QAOps validates:

- Real user behavior

- Performance under load

- Error rates and anomalies

Production becomes a quality signal, not a blind spot.

Together, these approaches close the feedback loop.
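To make the shift-right idea concrete, here is a minimal sketch of a post-release check that compares the current error rate against a baseline window. The `WindowStats` shape, the 2x ratio, the 1% floor, and the minimum sample size are all illustrative assumptions, not recommended values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WindowStats:
    """Request and error counts for one observation window (assumed shape)."""
    requests: int
    errors: int


def error_rate(stats: WindowStats) -> float:
    """Fraction of requests that failed; 0.0 when there was no traffic."""
    if stats.requests == 0:
        return 0.0
    return stats.errors / stats.requests


def is_regression(
    baseline: WindowStats,
    current: WindowStats,
    max_ratio: float = 2.0,    # assumed: flag when the rate more than doubles
    floor: float = 0.01,       # assumed: never flag below a 1% error rate
    min_requests: int = 100,   # assumed: ignore windows with too little traffic
) -> bool:
    """Return True when the current error rate exceeds the allowed threshold."""
    if current.requests < min_requests:
        return False
    threshold = max(error_rate(baseline) * max_ratio, floor)
    return error_rate(current) > threshold
```

A check like this could run a few minutes after each deployment, with a `True` result opening an incident or feeding a new regression test back into the pipeline.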

The Role of Automation in QAOps

Automation is necessary but not sufficient.

In QAOps, automation must be:

- Stable: Self-healing where possible

- Relevant: Focused on business-critical paths

- Fast: Optimized for pipeline execution

- Observable: Failures provide actionable insight

Bad automation creates noise.

Good automation creates trust.

QAOps teams invest more in maintaining test value than in increasing test count.
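One practical way to protect test value is to flag flaky tests, meaning tests that both pass and fail against the same commit. The sketch below assumes run history is available as `(test_name, commit_sha, passed)` tuples, which is a hypothetical record shape rather than the output of any particular tool.

```python
from collections import defaultdict
from typing import Iterable, Tuple

# Assumed record shape: (test name, commit SHA, whether the run passed).
Run = Tuple[str, str, bool]


def flaky_tests(runs: Iterable[Run]) -> list[str]:
    """Return sorted names of tests with mixed results on a single commit."""
    outcomes = defaultdict(set)  # (test name, commit) -> set of seen results
    for name, sha, passed in runs:
        outcomes[(name, sha)].add(passed)

    # Both True and False for the same code means the result is not
    # explained by a code change, so the test itself is unreliable.
    flaky = {name for (name, _sha), seen in outcomes.items() if len(seen) == 2}
    return sorted(flaky)
```

A test that fails consistently on one commit and passes on another is not flagged here, since that pattern usually reflects a real change in the code rather than noise.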

AI Is Accelerating QAOps Adoption

AI is a major catalyst for QAOps in 2026.

Used correctly, AI helps with:

- Test case generation

- Test maintenance and self-healing

- Risk-based test prioritization

- Failure analysis and root cause detection

But here’s the hard truth:

AI doesn’t replace QA thinking. It amplifies it.

Teams that rely blindly on AI-generated tests accumulate verification debt. QAOps requires human oversight plus intelligent automation.
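Risk-based prioritization does not have to start with a model. The sketch below uses a hand-written heuristic as a stand-in: tests that cover changed files and tests that failed recently run first. The `CandidateTest` fields and the 0.7 / 0.3 weights are assumptions chosen for illustration, and a human should review the ordering rather than trust it blindly.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class CandidateTest:
    """Metadata about one test (hypothetical fields)."""
    name: str
    covered_files: frozenset[str]
    recent_failure_rate: float  # between 0.0 and 1.0
    duration_seconds: float


def risk_score(test: CandidateTest, changed_files: frozenset[str]) -> float:
    """Score a test higher if it touches changed code or failed recently."""
    touches_change = 1.0 if test.covered_files & changed_files else 0.0
    # Assumed weights: relevance to the change matters more than history.
    return 0.7 * touches_change + 0.3 * test.recent_failure_rate


def prioritize(
    tests: Iterable[CandidateTest], changed_files: Iterable[str]
) -> list[CandidateTest]:
    """Order tests by descending risk; among equal scores, faster tests first."""
    changed = frozenset(changed_files)
    return sorted(
        tests,
        key=lambda t: (-risk_score(t, changed), t.duration_seconds),
    )
```

Running the highest-risk tests first shortens the time to the first meaningful failure, even when the full suite still runs afterward.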

QAOps Changes Team Structure and Culture

QAOps is as much cultural as it is technical.

Successful teams:

- Embed Quality Assurance engineers into product squads

- Involve Quality Assurance in sprint planning and design

- Share ownership of test failures

- Treat broken pipelines as production incidents

In QAOps, quality failures are team failures, not QA failures.

Metrics That Matter in QAOps

Traditional Quality Assurance metrics (number of test cases, defects found) are insufficient on their own: they measure activity, not outcomes.

QAOps focuses on:

- Deployment frequency

- Change failure rate

- Mean time to detect (MTTD)

- Mean time to recover (MTTR)

- Escaped defects

These metrics tie quality directly to business impact.
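Several of these metrics are simple to compute once deployments and incidents are recorded with timestamps. Here is a minimal sketch, assuming a hypothetical `Incident` record. Definitions vary between teams; this version measures detection and recovery time from the moment the incident started.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean
from typing import Sequence


@dataclass(frozen=True)
class Incident:
    """Timestamps for one production incident (assumed record shape)."""
    started_at: datetime
    detected_at: datetime
    resolved_at: datetime


def change_failure_rate(total_deployments: int, failed_deployments: int) -> float:
    """Share of deployments that caused a failure; 0.0 with no deployments."""
    if total_deployments == 0:
        return 0.0
    return failed_deployments / total_deployments


def mean_time_to_detect(incidents: Sequence[Incident]) -> timedelta:
    """Average gap between an incident starting and being detected."""
    if not incidents:
        return timedelta(0)
    seconds = mean((i.detected_at - i.started_at).total_seconds() for i in incidents)
    return timedelta(seconds=seconds)


def mean_time_to_recover(incidents: Sequence[Incident]) -> timedelta:
    """Average gap between an incident starting and being resolved."""
    if not incidents:
        return timedelta(0)
    seconds = mean((i.resolved_at - i.started_at).total_seconds() for i in incidents)
    return timedelta(seconds=seconds)
```

Tracking these values per week or per release makes trends visible, which is usually more useful than any single number.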

Common Mistakes When Adopting QAOps

Many organizations struggle with QAOps because they:

- Automate bad tests

- Overload pipelines with slow UI tests

- Ignore test data management

- Treat QAOps as a tooling problem

- Skip change management

QAOps fails when it’s implemented mechanically instead of strategically.

How to Start with QAOps (Practically)

If you’re transitioning toward QAOps, start here:

- Stabilize your CI/CD pipeline

- Automate critical paths first

- Integrate Quality Assurance early into delivery planning

- Introduce observability and production feedback

- Measure outcomes, not activity

QAOps is built incrementally, not overnight.

What QAOps Means for the Future of QA

Quality Assurance is not disappearing. It’s becoming more powerful.

In 2026, top QA professionals are:

- Quality strategists

- Automation architects

- Risk analysts

- Delivery enablers

QAOps elevates QA from execution to engineering leadership.

Final Thoughts: QAOps Is the New Default

Continuous delivery demands continuous quality. QAOps provides the structure to make that possible without slowing teams down.

Organizations that adopt QAOps:

- Release faster

- Fail safer

- Recover quicker

- Build trust with users

Those that don’t will continue firefighting defects they could have prevented.

Quality hasn’t lost importance.

It has finally gained operational relevance.
