Artificial Intelligence (AI) is no longer just a productivity enhancer. According to tech insiders across Silicon Valley and enterprise tech circles, AI is actively reshaping how work gets done, from coding and compliance to marketing, finance, and operations.

What’s changing isn’t just speed. It’s structure, roles, and business models.

Let’s break down what this shift means for companies, professionals, and the future of work.

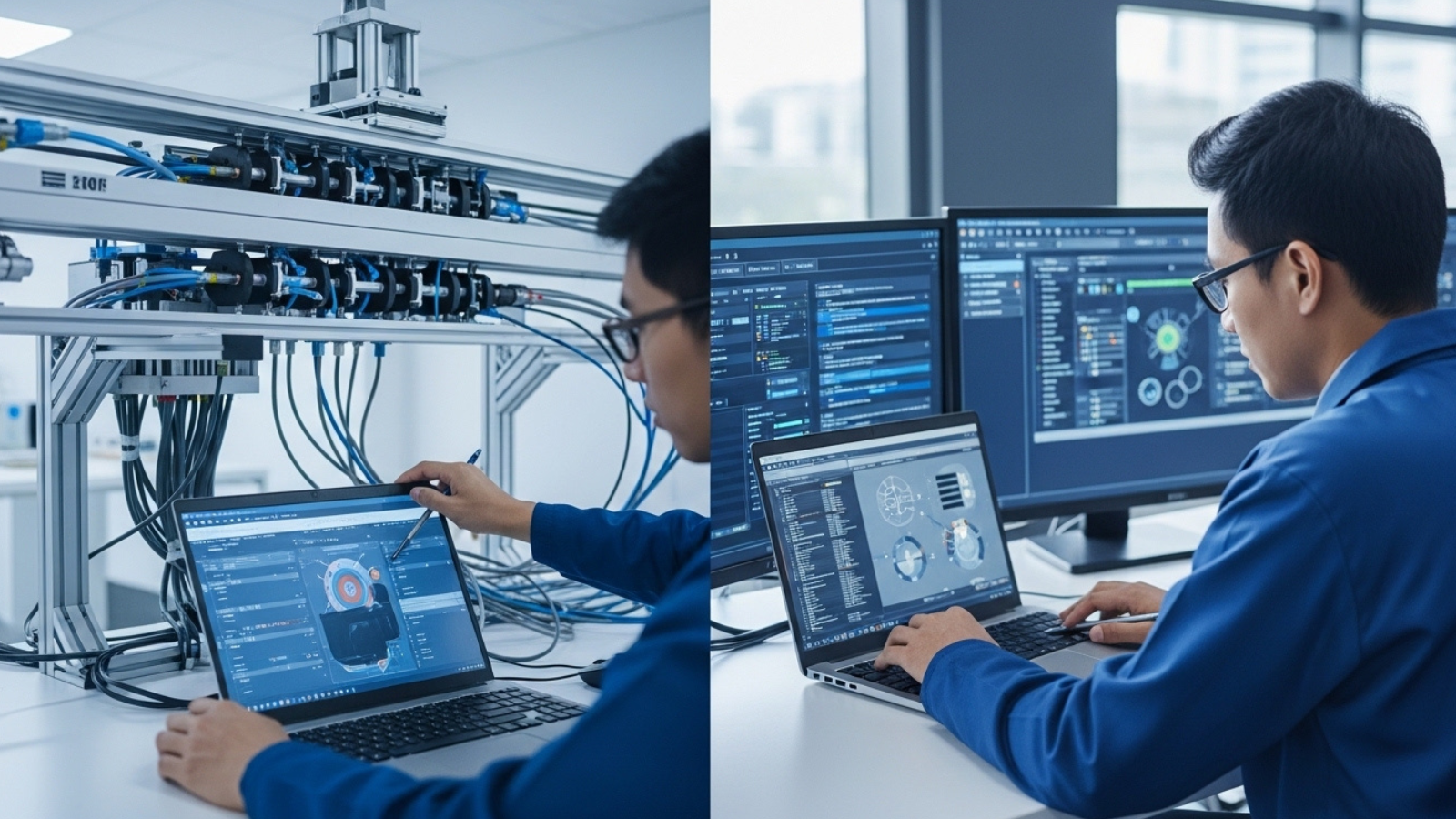

From Assistants to Autonomous Agents

For years, AI tools acted like digital assistants, helping write emails, summarize documents, or suggest code.

Now, companies like OpenAI and Anthropic are pushing AI systems that can:

- Execute multi-step workflows

- Make decisions within set constraints

- Operate across multiple software tools

- Complete tasks with minimal supervision

Instead of answering a single prompt, AI agents can chain steps together:

- Research → Analyze → Generate report → Send email → Update CRM

That’s not assistance. That’s task execution.

Automation Is Moving Up the Value Chain

Traditional automation (such as robotic process automation, or RPA, tools from UiPath) focused on rule-based, repetitive tasks: data entry, invoice processing, and compliance checks.

Today’s AI systems are automating:

- Drafting legal documents

- Writing production-ready code

- Creating marketing campaigns

- Performing financial forecasting

- Supporting medical documentation

This is white-collar workflow automation at scale.

Tech insiders suggest this wave could affect junior and mid-level roles first, particularly in:

- Administrative support

- Customer service

- Content production

- Entry-level finance

- Junior development

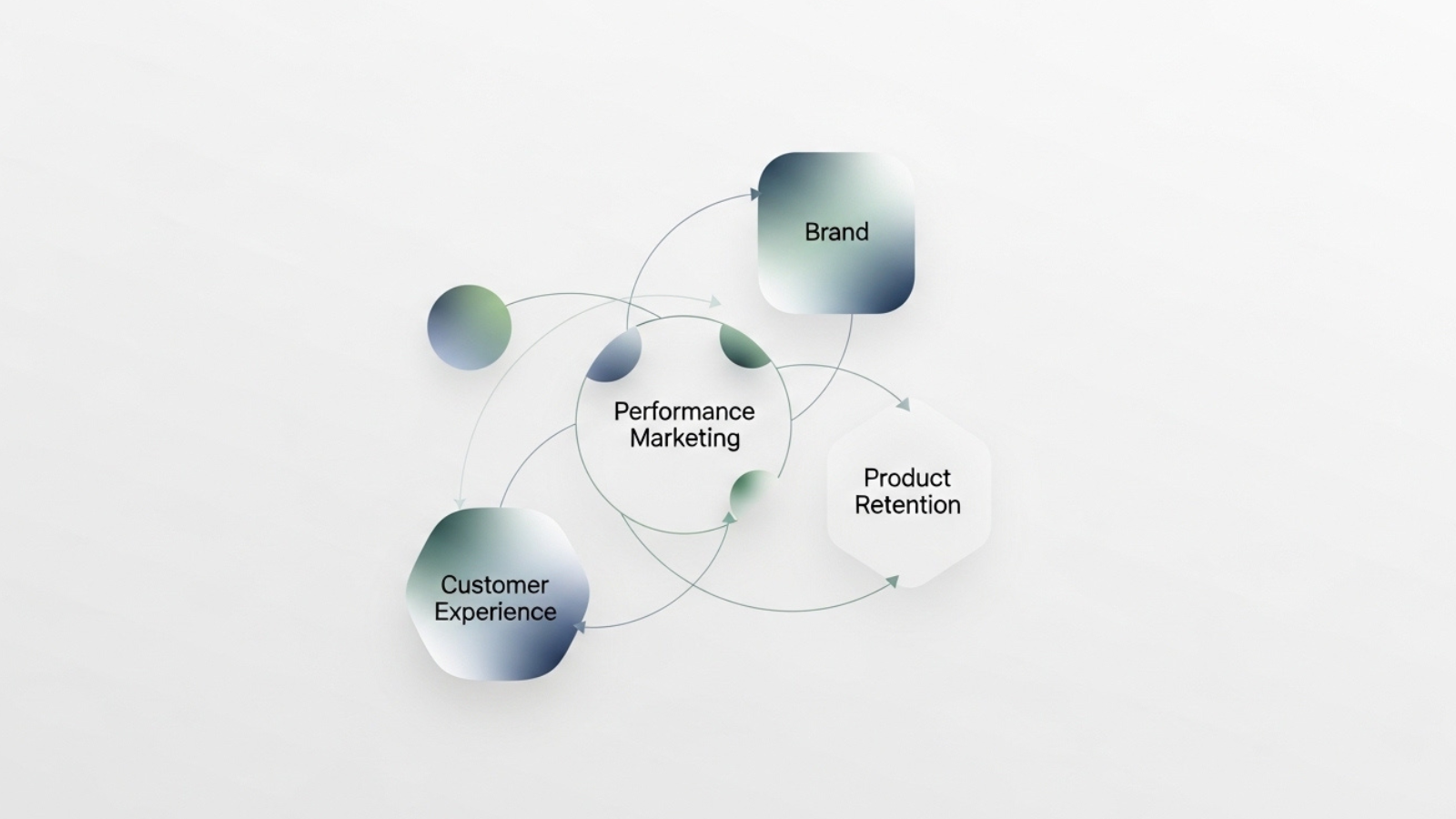

The Shift from SaaS to AI-Native Platforms

One of the biggest structural changes is happening quietly:

AI is changing how software is sold and used.

Traditional SaaS:

- Human inputs data

- Software processes

- Human interprets output

AI-native workflow:

- Human sets objective

- AI executes the workflow

- Human reviews results

This changes:

- Pricing models

- Headcount requirements

- Software stack design

- IT infrastructure planning

Companies are now asking:

“Do we need more tools, or smarter automation across the tools we already have?”

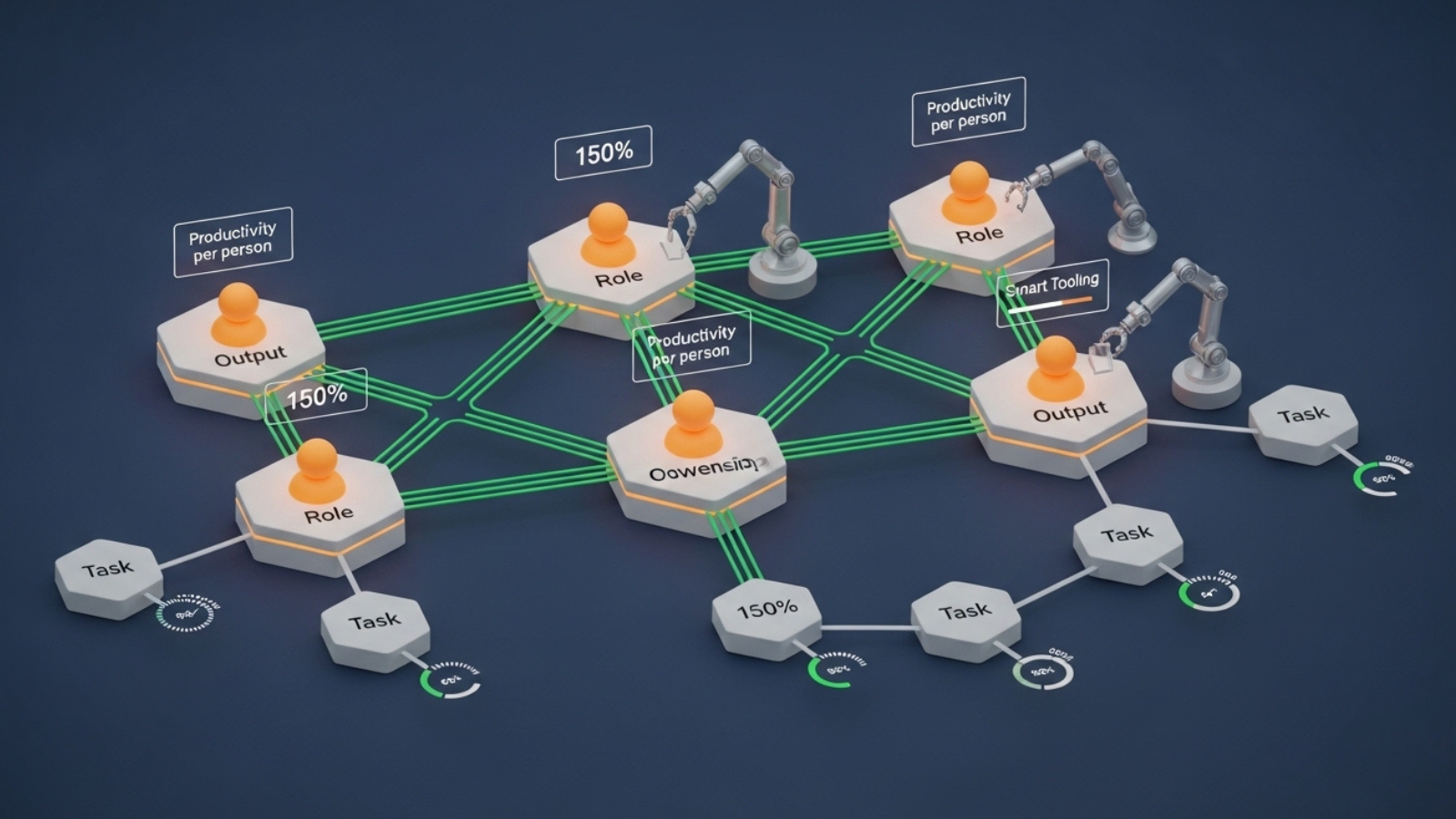

Productivity Gains vs. Workforce Disruption

Tech insiders remain divided on one issue:

Is this transformation a net positive, or is it primarily disruptive?

Optimistic View

- Workers become “AI supervisors”

- Output per employee increases

- Smaller teams achieve enterprise-level productivity

- New job categories emerge (workflow designer, automation strategist)

Concerned View

- Entry-level roles shrink

- Skill gaps widen

- Security & governance risks grow

- Overreliance on imperfect models increases business risk

The truth? Both are likely happening simultaneously.

What This Means for Businesses

Companies that adapt early will:

- Redesign workflows around AI

- Upskill teams in prompt engineering & automation strategy

- Build governance frameworks

- Shift from tool-centric to outcome-centric operations

Those that resist change risk:

- Slower execution

- Higher operating costs

- Competitive disadvantage

The key question is no longer:

“Should we use AI?”

It’s:

“Where can it autonomously execute work today?”

The Rise of the AI-Augmented Professional

The future professional will not compete against AI but work alongside it.

Tomorrow’s top performers will:

- Orchestrate AI tools

- Design automated workflows

- Validate outputs

- Focus on strategic thinking & relationship-building

In short:

Routine execution becomes automated. Strategic thinking becomes a premium skill.